GUEST BLOG: Modernizing mission compute -- Enabling AI through modular GPU expansion

Blog · March 10, 2026

As embedded systems continue to demand higher performance for data-intensive workloads, system designers are under increasing pressure to add compute capability without redesigning the entire platform. Many deployed mission systems were designed when deterministic signal processing and traditional image fusion dominated requirements. Those architectures still perform well for legacy workloads, but they struggle to run today's artificial intelligence (AI)-driven classification, perception, and decision-support algorithms at operational tempo. XMC [switched mezzanine card] modules can offer a proven, modular approach to scaling compute performance while preserving system flexibility, a compact footprint, and life cycle stability.

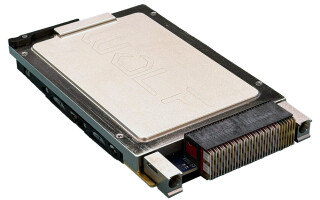

XMC modules enable designers to add specialized processing, such as GPU [graphics processing unit] acceleration, without modifying the base carrier card or backplane. By leveraging a standardized mezzanine interface, compute capability can be upgraded or tailored to specific workloads while maintaining a common system architecture. This modularity can substantially reduce development time, integration risk, and long-term maintenance costs.

XMC extends the mezzanine concept to high-speed serial fabrics such as PCI Express, with the interface standardized through VITA 42 (XMC 1.0), VITA 61 (XMC 2.0), and VITA 88 (XMC+). Because this high-bandwidth expansion leaves the underlying carrier card and backplane unchanged, XMC is particularly well-suited for incremental capability upgrades in deployed systems.

For space- and power-constrained environments, XMC offers a compact form factor that delivers significant compute density without consuming additional board slots. XMC sites are commonly available on 3U and 6U VPX single-board computers, so GPU-based XMC cards can be integrated alongside existing processing resources. This gives integrators a practical way to introduce AI acceleration and parallel compute capability without the cost, schedule risk, or SWaP-C [size, weight, power, and cost] impact of replacing a mission computer or redesigning the platform.

Beyond compute expansion, XMC modules can be designed with application-specific I/O, enabling direct connectivity to sensors, video sources, and high-speed data interfaces. Placing processing this close to the data sources reduces interconnect complexity, lowers system latency, and improves overall efficiency – particularly for time-sensitive workloads such as signal processing, data analytics, and AI inference. As mission requirements evolve, the interchangeable nature of XMC modules lets system integrators adapt capability quickly by swapping mezzanine cards rather than reengineering the system.

By leveraging XMC, system integrators can also align with modular open systems approach (MOSA) objectives, supporting easier in-field upgrades, repairs, and technology refresh. Treating compute and I/O as modular, replaceable elements enables processing hardware to evolve on technology-relevant timelines while preserving long-life platforms, unlocking new AI-driven capabilities in existing systems without full platform replacement.

A practical path to AI enablement on existing programs

Many fielded mission systems struggle to adopt modern AI capabilities – not because sensors or algorithms are lacking, but because the underlying processing hardware was specified for a different era of computing. AI classification, perception, and multisensor fusion demand orders of magnitude more parallel processing than traditional signal-processing pipelines can provide. Replacing entire mission computers or platforms to close this gap is costly, disruptive, and often impractical for deployed fleets.

GPU-based XMC modules offer a practical path to modernize processing capability within existing architectures. By introducing high-density parallel computing through a standardized mezzanine interface, XMC cards enable AI workloads to run at operational frame rates without needing a full system redesign. This modular approach lets compute hardware be refreshed on technology-relevant timelines – independent of platform life cycle – unlocking AI-driven capabilities from sensors already in service while minimizing integration risk and preserving long-term system investments.

GPU-based XMC modules are particularly effective for workloads that benefit from parallel processing, including real-time data analysis, signal processing, and AI-driven applications. By integrating GPU acceleration at the mezzanine level, designers can achieve near-real-time performance without adding board slots or redesigning the carrier card.

Carlie Sutherland is a marketing executive at EIZO Rugged Solutions.

EIZO Rugged Solutions · https://www.eizorugged.com/