Autonomous AI acceleration requires hardware design to evolve

March 03, 2026

Across defense programs, autonomous artificial intelligence (AI) systems frequently stall between successful prototyping and field deployment. The limiting factor is rarely the software itself. Instead, progress slows when the underlying hardware platform was not engineered to evolve alongside rapidly changing AI workloads, sensors, and operational requirements. As the U.S. Department of Defense (DoD) accelerates its adoption of AI, platform-level engineering decisions are increasingly determining whether autonomous AI platforms can transition from experiment to operational reality.

Over the past several years, deploying autonomous AI systems has become a strategic priority for the DoD. Autonomous platforms are expected to sense, decide, and act independently, often in contested environments. While advances in AI algorithms and software models receive much of the attention, the practical challenge facing many programs is not whether autonomy can be achieved, but whether it can be deployed, sustained, and iterated at operational speed.

Evolution of U.S. DoD AI policy

While the DoD and other U.S. entities have used AI on an ad hoc basis over the past 60 years, 2018 marked a shift toward more formalized processes with the release of the “2018 DoD Artificial Intelligence Strategy.” That strategy emphasized the need to build a centralized infrastructure for AI development, to bridge AI technology developments from the DoD’s research and engineering communities into operational use, and to exert international leadership in military ethics and AI safety.

Subsequent initiatives, such as the “2020 DoD Data Strategy” and the creation of the Chief Digital and Artificial Intelligence Office (CDAO), further emphasized data-centric approaches and the optimization of AI capabilities across the DoD.

The “2023 DoD Data, Analytics, and AI Adoption Strategy” placed a major focus on speed, agility, learning, and responsibility. It emphasized decentralized authority and tight feedback loops between developers and end users, all aimed at improving decision-making within the DoD. The 2023 strategy set out a foundational, guiding approach to AI rather than a step-by-step guide.

2026 AI Acceleration Strategy

On January 12, 2026, the U.S. Secretary of Defense issued a memo outlining the AI Strategy for the DoD. The 2026 policy represents a significant shift in both the tone and the approach to the DoD’s use of AI. The earlier policy documents set broad, top-level goals like improving access to data across the entire department, cultivating a leading AI workforce, and the responsible development of AI applications. In contrast, the 2026 policy places a major emphasis on speed and “identifying and eliminating bureaucratic barriers to deeper integration, which are vestiges of legacy information technology and modes of warfare.”

In more practical terms, the 2026 DoD AI Strategy goes further than its predecessors by establishing seven Pace-Setting Projects (PSPs), to be initially administered by the CDAO. These PSPs will “serve as tangible, outcome-oriented vehicles for rapidly completing our buildout of the foundational AI enablers needed to accelerate AI integration across the entire Department.” For the DoD, these PSPs are also meant to establish a new execution standard for AI implementation: “single accountable leaders, aggressive timelines, measurable outcomes, and rapid iteration where failure accelerates learning and improvement.”

What acceleration means for autonomous AI systems

The 2026 DoD AI Strategy reframes speed of deployment as a requirement rather than a vague aspiration. This speed is, perhaps, most consequential for autonomous systems, where AI must operate onboard, in real time, and often without reliance on cloud connectivity or centralized compute resources.

For hardware designers and manufacturers, accelerating the speed of development and iteration introduces a fundamental tension. AI software stacks evolve rapidly as the models grow, while hardware platforms are expected to remain stable, certifiable, and deployable over long service lives. When platforms are engineered around fixed assumptions, the end product often requires redesigns, requalification cycles, and delays that ultimately contradict the acceleration goals.

Why autonomous AI stalls between prototype and deployment

Many autonomous AI prototypes are built to prove feasibility under controlled conditions. Power budgets are tightly sized, thermal solutions are optimized for known workloads, and I/O configurations are selected for a specific sensor suite. These designs may succeed in demonstrations, but they often lack the flexibility needed for deployment.

As AI software stacks mature, new requirements and operational conditions often emerge: higher inference rates or more complex models, additional or different sensors and data sources, an expanded mission scope, new accelerators or compute architectures, or more demanding environmental and reliability constraints.

When the hardware platform cannot adapt to integrate these changes, programs stall. Redesigns consume schedule and budget, and AI software progress outpaces hardware readiness. The result is a widening gap between the capabilities autonomous AI platforms are meant to deliver and the hardware that can actually be fielded.

Power and thermal headroom as enablers of speed

Designing platforms around average power consumption is insufficient for autonomous AI hardware. For one thing, AI workloads are inherently dynamic: inference patterns are not uniform, and compute demand can spike unexpectedly. For another, the power profiles of AI accelerators change with each new generation.

Deliberate decisions early in product development to increase a hardware platform’s power and thermal headroom can provide the flexibility to meet many of the challenges posed by the acceleration the DoD has outlined. This flexibility enables new models or accelerators to be introduced without redesign. These buffers also absorb variable compute spikes in AI workloads without throttling performance. Perhaps most crucially, building in headroom from the earliest design stages reduces the need for late-stage thermal requalification or recertification.
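As a rough illustration of why average-power sizing falls short, the following Python sketch compares a budget sized to average draw with one sized to peak draw plus a design margin. The wattages, the 20% margin, and the function names are hypothetical assumptions chosen for illustration; they do not come from the DoD strategy or from any specific platform.

```python
from dataclasses import dataclass


@dataclass
class PowerProfile:
    """Hypothetical power draw of an AI compute payload, in watts."""
    average_w: float
    peak_w: float


def budget_from_average(profile: PowerProfile) -> float:
    # Sizing to average draw leaves nothing in reserve for inference spikes.
    return profile.average_w


def budget_with_headroom(profile: PowerProfile, margin: float = 0.20) -> float:
    # Size to peak draw plus a fractional design margin (20% is an assumption).
    return profile.peak_w * (1.0 + margin)


def headroom_w(budget_w: float, profile: PowerProfile) -> float:
    # Positive: spare capacity at peak. Negative: shortfall that forces throttling.
    return budget_w - profile.peak_w


# Hypothetical current-generation accelerator and a hungrier next generation.
current = PowerProfile(average_w=60.0, peak_w=95.0)
next_gen = PowerProfile(average_w=70.0, peak_w=110.0)

lean = budget_from_average(current)      # sized to today's average draw
padded = budget_with_headroom(current)   # sized to today's peak plus margin

for name, profile in (("current", current), ("next_gen", next_gen)):
    print(f"{name}: lean {headroom_w(lean, profile):+.1f} W, "
          f"padded {headroom_w(padded, profile):+.1f} W")
```

Under these assumed numbers, the budget sized to average draw is already 35 W short at peak for the current accelerator, while the padded budget still leaves 4 W of margin for the hungrier next-generation part.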

Thermal margins in particular play a significant role: Edge systems are subjected to extreme temperatures, limited airflow, shock, vibration, and size constraints, all of which place unique demands on system design. Because external thermal conditions cannot be controlled, engineers must focus on minimizing internal heat production and maximizing heat dissipation. Localized heating from hardware accelerators can destabilize decision loops if not anticipated. Platforms that are engineered with sufficient margins from the earliest stages can evolve without sacrificing either reliability or flexibility.
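Thermal margin can be reasoned about in a similar way. The sketch below uses the common first-order steady-state approximation Tj = Ta + P × θJA (junction temperature equals ambient temperature plus dissipated power times junction-to-ambient thermal resistance) to show how a design that is comfortable on a lab bench can run out of margin at a field ambient. The thermal resistance, temperature limits, guard band, and power figures are all hypothetical; real programs would rely on detailed thermal modeling and testing rather than a single lumped resistance.

```python
def junction_temp_c(ambient_c: float, power_w: float, theta_ja_c_per_w: float) -> float:
    """First-order steady-state estimate: Tj = Ta + P * theta_JA."""
    return ambient_c + power_w * theta_ja_c_per_w


def max_sustained_power_w(ambient_c: float, tj_max_c: float,
                          theta_ja_c_per_w: float, guard_band_c: float = 10.0) -> float:
    """Largest steady power that keeps Tj at or below (Tj_max - guard band)."""
    return (tj_max_c - guard_band_c - ambient_c) / theta_ja_c_per_w


# Hypothetical values for illustration only.
THETA_JA = 0.25       # degC per watt, assumed lumped junction-to-ambient resistance
TJ_MAX = 105.0        # degC, assumed device junction limit
LAB_AMBIENT = 25.0    # degC, assumed bench ambient
FIELD_AMBIENT = 71.0  # degC, assumed worst-case field ambient
PAYLOAD_W = 110.0     # assumed sustained accelerator power

for label, ambient in (("lab", LAB_AMBIENT), ("field", FIELD_AMBIENT)):
    tj = junction_temp_c(ambient, PAYLOAD_W, THETA_JA)
    limit = max_sustained_power_w(ambient, TJ_MAX, THETA_JA)
    status = "ok" if PAYLOAD_W <= limit else "throttle or redesign"
    print(f"{label}: Tj={tj:.1f} C, power limit={limit:.1f} W -> {status}")
```

With these assumed values, the 110 W payload has ample margin at 25 °C but exceeds the roughly 96 W sustained limit at 71 °C, the kind of gap that otherwise tends to surface late as a thermal requalification.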

Modular architectures increase adaptability and flexibility

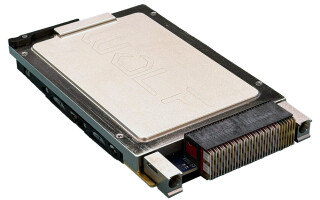

Modularity has become a critical design principle for accelerating the iterative design and development of autonomous AI platforms. Modular compute, I/O, and subsystem architectures enable platforms to adapt rapidly to hardware, software, or environmental changes. Modular architectures dramatically simplify tasks such as sensor upgrades or compute changes, which can often be made without redesign or requalification.

Modular platforms treat integration as a continuous process rather than a one-time event, supporting ongoing iteration and aligning hardware development with the rapid, feedback-driven execution model emphasized in the PSPs (Figure 1).

[Figure 1 ǀ Mission-command hardware gets configured as part of preparations for the Next Generation Command and Control (NGC2) capability during a technical setup and integration event as part of an Army exercise in late 2025. During the exercise, soldiers integrated advanced power systems and emerging AI-enabled tools onto vehicles in support of the Army’s NGC2 initiative, which aims to deliver faster, more resilient and more data-driven decision-making on the battlefield. U.S. Army photo by Staff Sgt. Tyler Ewing.]
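The modularity argument can be illustrated with a small Python sketch in which a platform defines a standardized slot once, and candidate modules are checked against that slot's interface and power envelope. The Slot and Module types, the interface labels, and the wattages are hypothetical stand-ins, not a real backplane standard or product catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    """Hypothetical standardized module slot, fixed at platform design time."""
    interface: str      # assumed interface label, not a real standard name
    max_power_w: float  # power envelope reserved for whatever fills the slot


@dataclass(frozen=True)
class Module:
    """Hypothetical sensor or compute module offered for that slot."""
    name: str
    interface: str
    power_w: float


def fits(slot: Slot, module: Module) -> bool:
    # A swap needs no platform change if the module matches the slot's
    # interface and stays within the power envelope reserved for the slot.
    return module.interface == slot.interface and module.power_w <= slot.max_power_w


sensor_slot = Slot(interface="ETH-10G", max_power_w=40.0)
candidates = [
    Module("legacy_camera", "ETH-10G", 15.0),
    Module("new_lidar", "ETH-10G", 32.0),
    Module("radar_proprietary", "CUSTOM-LVDS", 25.0),
]

for module in candidates:
    verdict = "drop-in" if fits(sensor_slot, module) else "needs platform change"
    print(f"{module.name}: {verdict}")
```

In this toy model, a new sensor that matches the slot's interface and stays within its reserved power is a drop-in change, whereas one that needs a different interface forces a platform change, which is the redesign cycle modular architectures aim to avoid.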

From rapid experimentation to production repeatability

As noted previously, autonomous AI platforms often stall in the transition period between experimentation and production. Lab platforms optimized for flexibility often lack the consistency and robustness required for field deployment, while production hardware may arrive too late to influence AI development.

To close this gap, the PSPs will benefit from platforms that support early experimentation on production hardware, use standardized interfaces that persist from lab to field, and rely on manufacturable designs that scale without major revisions.

Ruggedization and flexibility as acceleration tools

Ruggedization decisions made early in the design process can substantially accelerate deployment. Early attention to shock, vibration, and temperature extremes; power quality and signal integrity; and mechanical and thermal robustness reduces downstream delays caused by redesigns, recertifications, and field failures.

The DoD’s AI Acceleration Strategy makes speed its primary goal: AI capabilities must move faster from concept and design to operational deployment. Ultimately, iteration speed depends far more on the capability and flexibility of the underlying hardware platform than on the AI software stack. Hardware systems built with careful attention to flexibility and modularity are much better able to evolve at the pace the DoD policy calls for.

True speed in deploying autonomous AI systems does not come from moving faster at the software layer alone. It comes from designing platforms deliberately engineered to change, scale, and survive the transition from experimentation to operational deployment.

Drew Thompson is a technical writer and content specialist for Sealevel Systems. He holds an M.S. in global studies and international affairs from Northeastern University. Thompson can be reached at [email protected].

Sealevel Systems https://www.sealevel.com/