Data in the military metaverse: enterprise terrain management for training

Story | July 26, 2022

In order to create terrain for high-fidelity simulations used in military training, developers must find accurate source data, build to multiple terrain formats utilizing the same source data to support multiple runtimes, store the data, synchronize the data between customer sites, and adjust the terrain as required by the training scenario. In the past, this has necessitated complex and bespoke terrain development pipelines, and long lead times – but newer solutions are emerging.

Military forces and supporting industries need more accessible, connected, and customizable methods of leveraging terrain data. While organizations now have access to an unprecedented amount of high-fidelity terrain data, realizing the data’s full potential means conflating, correlating, and sharing the data with all of the many interoperable systems and applications. Currently, most organizations have separate terrain data pipelines for different applications, which results in different-looking terrain in each system, adversely affecting interoperability and causing “fair fight” issues.

In a recent article in the War on the Rocks newsletter, security-technology providers Jennifer McArdle and Caitlin Dohrman presented a vision for what may become the “military metaverse” for training: That is, a persistent simulated training environment. Such a metaverse could have many useful applications, from training through to mission planning and rehearsal for real-world operations. “Under the hood” of such a military metaverse would be a mix of simulation technologies to provide the user a seamless experience for whatever purpose the metaverse may be applied. This is a step-change from today’s approach of integrating different simulation products through standard interoperability protocols. Instead, organizations can run containerized technology “on the cloud,” using a modern and open web architecture.

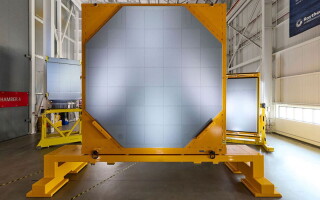

Since the 1980s, different military programs have worked to combine “models, simulations, people and real equipment into a common representation of the world.” Most recently, the U.S. Army established the Synthetic Training Environment (STE) Cross-Functional Team to create a “common synthetic environment.” The team’s goal is to converge live, virtual and constructive training through “common standards, common data, common terrain and an open architecture.” A one-world terrain underpins the entirety of STE (Figure 1).

[Figure 1 | U.S. Marine reserves participate in a virtual combat simulator. Modifications to terrain in this engine must be reflected across connected simulators, such as JTAC and flight simulators. Photo by Sgt. First Class Helen Miller.]

Whatever form future military metaverses take, terrain data will be a key factor driving their success. High-fidelity terrain data will deliver a 1:1 digital twin with Earth, suitable for training in multi-domain operations (MDO), mission rehearsal, mission planning, and much more. However, today’s stovepiped and slow terrain development operations will not meet this need. Terrain source data (e.g., 3D data from on-demand drone or satellite flyovers) must be imported, enhanced, stored, and streamed to applications that need it, with updates made in hours, not months.

The challenges of generating terrain for simulation

Traditionally, terrain databases consist of numerous (100+) data formats, with dramatically different levels of fidelity depending on the target applications. A flight simulator might need terrain that is low-fidelity (e.g., a simple satellite texture overlay), while a tank gunnery trainer might need every tree and bush rendered in high fidelity. Updating terrain data can take months, and terrain databases rarely correlate perfectly, which can cause fair-fight issues in a connected/interoperable environment. There are several key challenges involved in generating terrain.

Finding accurate source data

Defense organizations have utilized COTS [commercial off-the-shelf] terrain and geospatial data since the 1990s. Pulling from multiple data sources results in a more realistic representation of the real-world terrain. By importing high-resolution satellite imagery, road and building positions, forestry data, surface definitions (grass, sand, etc.) and seasonal data, simulations can render a more accurate “digital twin,” or simulated environment that better represents the real world. All source data needs to be precisely georeferenced, which matters especially when custom 3D models are placed in or on the terrain.

Today’s militaries have a strong focus on multi-domain operations (MDO) that can span vast distances and incorporate land, sea, air and space forces. For example, the U.S. Space Force stated that its priorities include moving “toward a more resilient on-orbit posture” at the same time as conventional forces have focused training on traditional combined arms operations. To support the full range of MDO training, terrain databases need to provide an accurate representation of the Earth from space down to blades of grass and the ocean floor. Acquiring the appropriate source data to build such a high-fidelity “digital twin” of Earth is a difficult challenge, and updating terrain with new source data is another important consideration.

Building multiple terrains for multiple runtimes

Modern military forces use many different simulations, and they often connect these simulations together for combined arms training. The U.S. Army, for example, has an entire program – LVC-IA – dedicated to providing “the framework for integrating the Army’s live, virtual and constructive systems into an integrated training environment (ITE).” Simulations ingest terrain data of various formats, and in order for integrated training to be successful, each simulation needs terrain data that is correlated with all of the other simulations. For example, if a flight simulator is connected to a combat simulator (Figure 2), or a Joint Terminal Attack Controller (JTAC) simulator, then both simulators must provide their operators with exactly the same “out of the window” view. Otherwise, the training value of the integrated system will be adversely impacted.

[Figure 2 | U.S. Army personnel conduct virtual training through the Synthetic Training Environment (STE). Photo by Staff Sgt. Simon McTizic.]

A number of products exist to build correlated terrain from source data. However, while terrain might be correlated, it still may not look exactly the same depending on the rendering engine (e.g., Unreal versus Unity) and the 3D models that are actually rendered in the scene. Generating correlated terrain that looks exactly the same across multiple rendering engines can be much more expensive and/or time-consuming and may even require custom modifications to the simulations themselves to support all the necessary terrain features (for example, underground caves, dense urban areas, or support for deep snow cover). Terrain is typically built to the lowest acceptable fidelity to ensure correlation and minimize complexity, noting that many military simulations cannot handle real-world fidelity (e.g., high tree density or megacities).
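One way to picture what “correlated” means is a sampling check: query two terrain representations at the same points and compare the elevations they report. The sketch below is illustrative only. The function names, the idea of a terrain as a height lookup, and the 0.5 m tolerance are assumptions made for the example, not part of any product or standard mentioned in this article.

```python
from typing import Callable, Iterable, Tuple

# Assumption: each runtime's terrain can be queried as height = f(x, y) in meters.
HeightFn = Callable[[float, float], float]


def max_elevation_mismatch(
    terrain_a: HeightFn,
    terrain_b: HeightFn,
    sample_points: Iterable[Tuple[float, float]],
) -> float:
    """Return the largest absolute height difference over the sample points."""
    return max(
        (abs(terrain_a(x, y) - terrain_b(x, y)) for x, y in sample_points),
        default=0.0,
    )


def is_correlated(
    terrain_a: HeightFn,
    terrain_b: HeightFn,
    sample_points: Iterable[Tuple[float, float]],
    tolerance_m: float = 0.5,  # hypothetical tolerance chosen for illustration
) -> bool:
    """Treat two terrains as correlated if no sample differs by more than the tolerance."""
    return max_elevation_mismatch(terrain_a, terrain_b, sample_points) <= tolerance_m


# Two toy terrains: one flat, one with a 2 m raised area east of x = 3.
def flat(x: float, y: float) -> float:
    return 100.0


def ridge(x: float, y: float) -> float:
    return 100.0 + (2.0 if x >= 3 else 0.0)
```

A check like this covers geometry only. As noted above, two terrains can pass it and still look different once each rendering engine applies its own models and shading.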

Building game-like 3D terrain

Procedural generation of terrain is a technique used to add real-world complexity and fidelity to a game world that is based upon relatively low-fidelity source data. For example, if you know the area of the terrain that is forested, you might procedurally generate the trees, bushes, and grass that populate the forest so it looks “real” in the simulation. Many modern computer games and computer game engines support procedural generation. Popular entertainment titles like “Kerbal Space Program,” “Star Citizen,” and “Elite Dangerous” use procedural generation to populate entire planets with features like trees and rocks to give players the sense of a realistic environment.

However, while procedural-generation technology has existed for more than two decades, it hasn’t been leveraged by military forces for simulations, primarily because the procedural-generation capability is engine-specific. This means that the procedural generation typically happens at runtime, just before the scene is rendered, breaking correlation with simulations that don’t support exactly the same procedural generation. The way the generation is handled greatly exacerbates the issues with building terrains for multiple runtimes. Open standards for terrain – such as the CDB standard – even go so far as to recommend not using procedural generation at all, which then results in terrains that are low-fidelity, suitable for flight and high-level aggregate constructive simulation, but unsuitable for ground-based training.
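The correlation problem can be illustrated with a small sketch of seeded procedural generation. Given the same seed and the same algorithm, tree placement is repeatable; any runtime that uses a different generator will place trees elsewhere. Everything here (the function names, the tile addressing, the 1,000 m tile size) is a hypothetical example rather than the method of any particular engine.

```python
import hashlib
import random
from typing import List, Tuple


def tile_seed(world_seed: int, tile_x: int, tile_y: int) -> int:
    """Derive a stable per-tile seed from a world seed and tile coordinates."""
    key = f"{world_seed}:{tile_x}:{tile_y}".encode("utf-8")
    return int.from_bytes(hashlib.sha256(key).digest()[:8], "big")


def generate_trees(
    world_seed: int,
    tile_x: int,
    tile_y: int,
    count: int,
    tile_size_m: float = 1000.0,  # hypothetical tile size
) -> List[Tuple[float, float]]:
    """Place `count` trees at pseudo-random positions inside one tile.

    The output depends only on the arguments, so two callers running this
    same code get identical positions for the same tile.
    """
    rng = random.Random(tile_seed(world_seed, tile_x, tile_y))
    return [
        (rng.uniform(0.0, tile_size_m), rng.uniform(0.0, tile_size_m))
        for _ in range(count)
    ]
```

Two simulations stay correlated only if both run this exact code, or if the generation runs once in a shared location and the results are distributed to every runtime.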

Managing terrain distribution and updates is complex

Anyone who uses a smartphone is familiar with apps like Google Maps that stream terrain from a cloud-based server directly to the phone. Distributing terrain across military organizations is rarely this simple. Terrain files are typically many gigabytes in size and either need to be copied across the network or distributed on SSDs [solid-state drives] and, in some cases, DVDs. Beyond the obvious cost in terms of time, there is also a massive issue with versioning. Users must manually delete old files and copy in new files, perhaps on hundreds or thousands of PCs. This problem is compounded by the number of different simulations being supported. The task of updating terrain across a typical warehouse-sized battle simulation center can be daunting.
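The manual delete-and-copy routine described above is the kind of task a content manifest can automate. The following is a minimal sketch, assuming terrain is split into named tiles and that a server publishes a hash for each one. The manifest layout and function names are invented for illustration.

```python
import hashlib
from typing import Dict, List


def content_hash(data: bytes) -> str:
    """Fingerprint a tile's bytes so changed content can be detected."""
    return hashlib.sha256(data).hexdigest()


def plan_sync(
    server_manifest: Dict[str, str],
    local_manifest: Dict[str, str],
) -> Dict[str, List[str]]:
    """Compare tile-name -> hash manifests and list the work a client must do.

    Tiles that are missing locally or whose hash differs are downloaded;
    tiles the server no longer lists are deleted.
    """
    download = sorted(
        tile for tile, digest in server_manifest.items()
        if local_manifest.get(tile) != digest
    )
    delete = sorted(tile for tile in local_manifest if tile not in server_manifest)
    return {"download": download, "delete": delete}
```

With a plan like this, each PC fetches only the tiles that changed instead of replacing a multi-gigabyte database wholesale.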

Runtime dynamic terrain modification: a difficult task

While the military has successfully integrated different simulations for many years, support for dynamic terrain correlation has never been fully achieved. Military actions affect the terrain in many ways, from vehicles making tracks and artillery causing craters to the destruction of homes and infrastructure. Ideally, these changes would be represented in all of the connected simulations. The operator in the JTAC simulator would observe the same effect on the target as the pilot in the flight simulator, even though they might be using different rendering engines to visualize the scene. This will be even more important for the military metaverse, when all connected technologies must access terrain that represents a single “source of truth.” Dynamic terrain modifications need to be stored centrally and affect all aspects – from physical simulation (like vehicles driving over rubble) to artificial intelligence (like fleeing civilian entities unable to use a destroyed bridge) to the visual scene (for example, destroyed buildings).
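A central store of terrain modifications can be sketched as an append-only log that every connected simulation reads from. The class and field names below are hypothetical, and a real system would add networking, persistence, and geometry far richer than a point and a radius.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class TerrainModification:
    sequence: int                  # position in the shared log, starting at 1
    kind: str                      # e.g. "crater" or "destroyed_bridge"
    position: Tuple[float, float]  # location in terrain coordinates
    radius_m: float                # affected radius in meters


@dataclass
class TerrainModificationLog:
    """Append-only record acting as the single source of truth for changes."""

    _entries: List[TerrainModification] = field(default_factory=list)

    def record(
        self, kind: str, position: Tuple[float, float], radius_m: float
    ) -> TerrainModification:
        """Append a new modification and assign it the next sequence number."""
        mod = TerrainModification(len(self._entries) + 1, kind, position, radius_m)
        self._entries.append(mod)
        return mod

    def since(self, last_seen: int) -> List[TerrainModification]:
        """Return every modification with a sequence number above `last_seen`."""
        return self._entries[last_seen:]
```

Each client (a flight simulator, a JTAC simulator, an AI pathfinding service) would remember the last sequence number it applied and ask only for newer entries, so all of them converge on the same terrain state regardless of rendering engine.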

Addressing terrain challenges through cloud-enabled terrain management

Thanks largely to innovations in cloud computing, procedural generation, and data collection (e.g., photogrammetry), the aforementioned terrain challenges are rapidly being solved. This is critical for military metaverses – for example, the U.S. Army Synthetic Training Environment (STE) – to succeed, since high-fidelity terrain must be available on-demand to the connected applications that need it.

Good source data is now readily available, from providers like MAXAR and LuxCarta. Less than a decade ago, only satellite (or aerial) imagery was available to texture terrain for simulation. Today, MAXAR can provide 3D data for any location on the planet, LuxCarta can automatically extract data from imagery to feed into procedural generation algorithms, and OpenStreetMap provides the majority of the world’s roads and building footprints from which the smallest villages to the largest megacities can be generated to support simulated training.

New platforms like Mantle ETM are solving terrain correlation and dynamic terrain problems by moving procedural generation onto the cloud and streaming 3D content directly to supported applications, such as VBS4 and games based on the Unreal game engine. Mantle ETM is based on proven COTS components and offers development/design services for creating simulated terrain for training, mission rehearsal, visualization, and terrain analysis. The cloud-enabled platform is already used within U.S. Army STE, working with many different terrain data input formats, including 3D data from One World Terrain.

Cloud computing will, of course, enable the military metaverse; at a recent U.S. Army panel on the future of the STE, Brig. Gen. Jeth Rey, Director of the Network Cross-Functional Team in U.S. Army Futures Command, made his team’s priorities clear. He said, “We are looking to move from a network-centric to a data-centric environment to support the STE … We will have to have cloud capability, AI [artificial intelligence], and machine learning.”

The road to the “military metaverse” goes through the interconnectivity of systems and big data. Cloud-capable terrain management will lead the way.

Pete Morrison is co-founder and chief commercial officer at BISim. He is an evangelist for the use of game technologies and other COTS-type products and software in the simulation and training industry. Pete studied computer science and management at the Australian Defence Force Academy and graduated with first-class honors. He also graduated from the Royal Military College, Duntroon, into the Royal Australian Corps of Signals. He served as a Signals Corps officer for several years. His final posting was as a Project Officer in the Australian Defence Simulation Office (ADSO).

BISim • https://bisimulations.com/