Supercomputers drive high-performance embedded multiprocessing

July 25, 2011

Open standards and off-the-shelf drivers and middleware expand multicomputing architectural capability in HPC systems.

Many of today’s supercomputers or High-Performance Computing (HPC) systems are based on vast clusters and arrays of the twin industry standards of Intel’s multicore processors and NVIDIA’s General Purpose Graphics Processing Units (GPGPUs). Processors and GPGPUs are scaled to many thousands of compute nodes over InfiniBand with additional connectivity via 10 and 40 GbE, providing low latency and high data throughput to support applications from scientific research, engineering, and meteorological forecasting to financial trading floors and government information processing. These systems share key architectural concepts that are supported by mainstream operating systems such as Windows and Linux plus a wealth of off-the-shelf middleware, drivers, and libraries.

Switched-fabric dilemma

Unlike the almost universal use of PCI Express for host-to-endpoint connectivity, the choice of Interprocessor Communication (IPC) switched fabric for embedded multicomputing remains fragmented. Serial RapidIO is currently popular in the signal-processing arena and is well supported with switches and integrated processor devices from manufacturers such as Freescale Semiconductor and Integrated Device Technology (IDT). However, 10 GbE for connectivity and InfiniBand for low-latency, high-throughput IPC are the preferred technologies for commercial HPC systems. InfiniBand's market size sustains a large ecosystem of off-the-shelf product and service suppliers. Each InfiniBand lane signals at 2.5 Gbit/s in each direction over bidirectional links. Data is 8B/10B encoded, giving a theoretical maximum data rate of 8/10ths of the signaling rate (2 Gbit/s per lane), and lanes can be aggregated into wider links, for example 4X or 12X, for higher throughput.
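As a rough illustration of the encoding arithmetic, the sketch below computes the theoretical payload rate of an InfiniBand link from the per-lane signaling rate, the 8B/10B efficiency, and the link width. It assumes the 2.5 Gbit/s per-lane figure quoted above and deliberately ignores packet headers and other protocol overhead, so real application throughput will be lower. The function name `data_rate_gbps` is illustrative only.

```python
"""Theoretical InfiniBand link data rate under 8B/10B encoding (sketch)."""


def data_rate_gbps(signal_gbps_per_lane: float, lanes: int) -> float:
    """Return the payload rate in Gbit/s for one direction of a link.

    8B/10B encoding carries 8 data bits in every 10 signaled bits, so the
    usable rate is 8/10 of the raw signaling rate, multiplied by the number
    of aggregated lanes. Packet headers and protocol overhead are ignored.
    """
    if lanes < 1:
        raise ValueError("a link needs at least one lane")
    return signal_gbps_per_lane * lanes * 8 / 10


if __name__ == "__main__":
    # 2.5 Gbit/s per-lane signaling, as quoted in the text.
    for lanes in (1, 4, 12):
        print(f"{lanes}X: {data_rate_gbps(2.5, lanes):.1f} Gbit/s")
```

With the 2.5 Gbit/s figure this prints 2.0, 8.0, and 24.0 Gbit/s for 1X, 4X, and 12X links, showing why wide links matter for high-throughput IPC.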

InfiniBand is also available at single, double, and quad data rates (SDR, DDR, and QDR), while fiber-optic interconnect options extend transmission distance. InfiniBand is designed for very low latency and efficient data packing, and its specification does not define any higher levels of protocol. For HPC and general computing applications, these protocols are provided by a unified, open-source, optimized Remote Direct Memory Access (RDMA) software stack that operates under Windows or Linux for both InfiniBand and 10 GbE. The stack, distributed as the OpenFabrics Enterprise Distribution (OFED), is supported and maintained by the OpenFabrics Alliance (www.openfabrics.org), a broadly based industry alliance, and has become the de facto data movement standard for HPC systems.
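Applying the 8B/10B arithmetic to the single, double, and quad data rates gives a small table of theoretical payload rates. The sketch assumes per-lane signaling of 2.5, 5, and 10 Gbit/s for SDR, DDR, and QDR respectively, that all three keep 8B/10B encoding, and that protocol overhead can be ignored; the names `payload_gbps` and `rate_table` are hypothetical helpers, not part of any InfiniBand software interface.

```python
"""Theoretical InfiniBand payload rates for SDR, DDR, and QDR links (sketch)."""
from __future__ import annotations

BASE_SIGNAL_GBPS = 2.5  # SDR per-lane signaling rate
RATE_MULTIPLIER = {"SDR": 1, "DDR": 2, "QDR": 4}  # assumed doubling per step
LINK_WIDTHS = (1, 4, 12)


def payload_gbps(generation: str, lanes: int) -> float:
    """Payload rate in Gbit/s, one direction, ignoring protocol overhead."""
    signal = BASE_SIGNAL_GBPS * RATE_MULTIPLIER[generation]
    # 8B/10B encoding leaves 8 of every 10 signaled bits for data.
    return signal * lanes * 8 / 10


def rate_table() -> dict[tuple[str, int], float]:
    """Map (data rate, link width) pairs to their theoretical payload rate."""
    return {(g, w): payload_gbps(g, w) for g in RATE_MULTIPLIER for w in LINK_WIDTHS}


if __name__ == "__main__":
    for (gen, width), rate in rate_table().items():
        print(f"{gen} {width}X: {rate:g} Gbit/s")
```

Under these assumptions a QDR 4X link carries 32 Gbit/s of payload in each direction and a QDR 12X link 96 Gbit/s, before any header overhead.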

Compute nodes

Intel's AVX-equipped multicore Xeon processors and NVIDIA's Tesla 20-series GPGPUs are key elements of HPC nodes, deployed in large numbers as the target applications require. A GPGPU contains arrays of many Single Instruction Multiple Data (SIMD) processor cores, ideally suited to complex, parallel, multithreaded applications; meanwhile, the latest Xeon platforms offer high-performance floating-point vector processing with one 256-bit AVX unit per processor core. Compute node types can be mixed, and system performance is scaled to the application by choosing suitable fabric topologies and link widths and by arranging processing nodes into clusters and arrays.
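To make the 256-bit AVX figure concrete, the sketch below counts how many floating-point values fit in one AVX register and turns that into a per-core theoretical peak. The number of vector operations a core completes per clock cycle is supplied by the caller as an assumption (`vector_ops_per_cycle` is a hypothetical parameter, not an Intel specification), as is the clock rate in the usage lines; consult the processor documentation for real values.

```python
"""Vector width and per-core peak FLOP arithmetic for 256-bit AVX (sketch)."""

AVX_REGISTER_BITS = 256
ELEMENT_BITS = {"single": 32, "double": 64}  # IEEE 754 binary32 / binary64


def lanes_per_register(precision: str) -> int:
    """Number of floating-point values packed into one 256-bit AVX register."""
    return AVX_REGISTER_BITS // ELEMENT_BITS[precision]


def peak_gflops_per_core(clock_ghz: float, precision: str,
                         vector_ops_per_cycle: int) -> float:
    """Theoretical peak GFLOPS for one core.

    peak = clock (GHz) x values per register x vector operations per cycle.
    vector_ops_per_cycle is an ASSUMED, caller-supplied figure.
    """
    return clock_ghz * lanes_per_register(precision) * vector_ops_per_cycle


if __name__ == "__main__":
    print(lanes_per_register("single"), lanes_per_register("double"))
    # Hypothetical 2.0 GHz core issuing one 256-bit vector operation per cycle.
    print(peak_gflops_per_core(2.0, "double", 1))
```

The first line prints `8 4`: one register holds eight single-precision or four double-precision values, which is where AVX's vector-processing gain over scalar code comes from.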

Deployable military applications

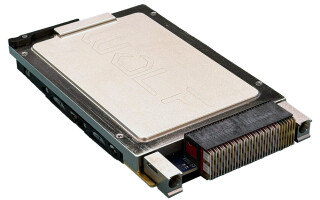

The development and deployment of advanced radar, sonar, electro-optical sensor processing, and situational awareness systems appear set to benefit from the rapid evolution of widely adopted HPC technologies. Embeddable, low-power versions of the compute nodes and other key semiconductor devices are available that meet the vital Size, Weight, and Power (SWaP) requirements of military platforms without compromising performance. Products can therefore now be realized in rugged formats, such as 6U VPX (VITA 46), that survive the harshest military environments. Because off-the-shelf Linux, Windows, fabrics, drivers, and libraries are proven through wide commercial use, building on them significantly reduces project development risk and timescales. Future upgrade and performance-enhancement paths are also assured and can be brought to maturity much earlier in the technology cycle. Fully compatible with mainstream HPC concepts, the new DSP280 6U VPX multicomputer from GE Intelligent Platforms, shown in Figure 1, uses two quad-core, second-generation Core i7 devices with AVX, plus InfiniBand and 10 GbE RDMA data plane ports.

Figure 1: A DSP280 6U VPX multicomputer offered by GE Intelligent Platforms
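The processor count of the DSP280 described above translates into a simple theoretical peak estimate. In the sketch below, only the two processors and four cores per processor come from the text; the clock rate and floating-point operations per cycle are HYPOTHETICAL placeholders, not published DSP280 figures, so the result illustrates the method rather than the board's actual performance. Sustained performance on real sensor-processing workloads is typically well below theoretical peak.

```python
"""Board-level theoretical peak for a two-processor, quad-core board (sketch)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class BoardConfig:
    processors: int                # two devices, per the text
    cores_per_processor: int       # quad-core, per the text
    clock_ghz: float               # HYPOTHETICAL placeholder
    flops_per_cycle_per_core: int  # HYPOTHETICAL placeholder

    @property
    def total_cores(self) -> int:
        return self.processors * self.cores_per_processor

    def peak_gflops(self) -> float:
        """Theoretical peak: cores x clock (GHz) x FLOPs per cycle per core."""
        return self.total_cores * self.clock_ghz * self.flops_per_cycle_per_core


if __name__ == "__main__":
    # Clock and FLOPs-per-cycle values are illustrative assumptions only.
    board = BoardConfig(processors=2, cores_per_processor=4,
                        clock_ghz=2.0, flops_per_cycle_per_core=8)
    print(f"{board.total_cores} cores, {board.peak_gflops():.0f} GFLOPS theoretical peak")
```

A frozen dataclass keeps each hypothetical configuration immutable, so alternative clock or issue-width assumptions can be compared side by side without one overwriting another.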


The levels of performance and broad industry support for open standards such as RDMA, plus the availability of rugged Core i7 and GPGPU products, are bringing high-performance multicomputing architectural capability in military and aerospace systems ever closer to its commercial HPC equivalent. Any potential advantages of other fabrics or computing technologies will be offset by rapid technology evolution (perhaps even revolution) and the faster time-to-market of new products, driven by the strength of the growing HPC market.

To learn more, e-mail Duncan at [email protected].