Enabling AdvancedTCA to support truly distributed processing applications

May 16, 2009

An important limitation has prevented AdvancedTCA from establishing itself even more widely as the communications architecture of choice for next-generation networks: the PCI Express control architecture definitions. This challenge can be overcome through an understanding of serial link technologies, including the impact of PCI Express bridging and reference clock shortcomings, along with the corresponding open systems standards and a Universal AMC.

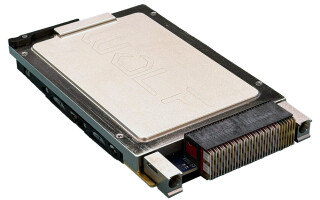

The Advanced Mezzanine Card (AdvancedMC or AMC) specifications published by PICMG define a small form factor module that is easily integrated into either a host carrier board or into a dedicated chassis (Figure 1 – see also www.picmg.org/pdf/AMC.0_R2.0_Short_Form.pdf). The AMC form factor was originally defined for deployment in NEBS-compliant telecom systems on either an AdvancedTCA carrier board or in a MicroTCA platform. AdvancedMC modules are sized to contain all of the hardware and firmware required for a system element, such as a processor node, I/O port controllers, or storage devices.

Figure 1: Simplified AdvancedTCA architecture: Each AdvancedTCA board can be given a unique "personality" dependent upon the AdvancedMC module mounted on it.

Simple engineering rules define how AdvancedMCs are aggregated into larger physical and functional system architectures. However, when AdvancedMCs are deployed in some applications, including communication gateways, the PCI Express control architecture definitions present challenges. This article examines serial link technologies, including the impact of PCI Express bridging and reference clock limitations and the corresponding open systems standards, and shows why a Universal AMC is key to addressing these challenges.

The roles of serial link technologies

The AMC specification suite includes definitions for high-speed serial interconnect among modules using multiple serial link technologies, including Ethernet, PCI Express, and Serial RapidIO. Industry practice tends to assign application or user plane data traffic to the Ethernet or Serial RapidIO infrastructure and local control plane traffic to the PCI Express infrastructure.

Ethernet and Serial RapidIO network definitions are based on a loosely coupled architecture model, where each node in the network has the resources required for its assigned function, and data traffic on the network is confined to application data packet transfers.

The PCI Express control architecture definitions are carried forward from the PCI local bus specifications originally published in the 1990s. This has permitted evolution of hardware platforms for application software designed to utilize PCI resources for data transfers. Under these definitions, one node is defined as the Root Complex and contains a host processor plus software to manage all other nodes (called End Nodes) on the shared bus. The host processor is assumed to have complete control over all of the resources at each target node, and the bridging interface between the host processor and an End Node is called a Transparent Bridge (TB), as depicted in Figure 2.

Figure 2: When transparent bridges are powered on, they learn the topology of the network by analyzing the source address of inbound frames from all attached nodes.
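The routing behavior behind this tightly coupled model can be illustrated with a minimal Python sketch. It assumes the simplified address-window behavior of a PCI-to-PCI transparent bridge (a single downstream memory base/limit window per bridge, with no address translation); the End Node names and addresses are hypothetical, and configuration space, I/O windows, and prefetchable ranges are omitted.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class TransparentBridge:
    """Simplified transparent bridge with one downstream memory window."""
    end_node: str   # hypothetical name of the End Node behind the bridge
    mem_base: int   # first address of the downstream memory window
    mem_limit: int  # last address (inclusive) of the downstream memory window

    def claims(self, addr: int) -> bool:
        # The bridge claims any host memory request inside its window.
        return self.mem_base <= addr <= self.mem_limit


def route(addr: int, bridges: List[TransparentBridge]) -> Optional[Tuple[str, int]]:
    """Return (End Node, address seen by that End Node), or None if unclaimed."""
    for bridge in bridges:
        if bridge.claims(addr):
            # The request is forwarded unchanged: the host address is the same
            # as the End Node address, so every End Node resource is host-visible.
            return bridge.end_node, addr
    return None


bridges = [
    TransparentBridge("end_node_a", 0x8000_0000, 0x800F_FFFF),
    TransparentBridge("end_node_b", 0x8010_0000, 0x801F_FFFF),
]

hit = route(0x8010_0040, bridges)
if hit is not None:
    name, addr = hit
    print(name, hex(addr))  # end_node_b 0x80100040
```

Because nothing is translated, the host can reach any register or memory location an End Node exposes, which is exactly the property that becomes a problem once the End Node carries its own processor.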

In contrast to Ethernet and Serial RapidIO, PCI Express interconnect is based on a tightly coupled network model where the host processor at the Root Complex is enabled to directly access and utilize all resources at intervening switches and at each End Node. The host processor is the master of the physical interconnect and controls all data transfer operations; the other nodes in the network are functional slaves and respond to commands issued by the Root Complex. Further, the host processor expects to have direct control over all hardware resources in the End Nodes.
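A simplified enumeration pass shows what this complete control means in practice: the Root Complex assigns a host address to every base address register (BAR) on every End Node, so all End Node memory and registers land in the host's address map. This Python sketch is illustrative only; it assumes a flat list of End Nodes, omits bus numbering and bridge-window programming, and uses hypothetical node names and BAR sizes.

```python
from typing import Dict, List, Tuple


def align_up(value: int, alignment: int) -> int:
    """Round value up to a multiple of alignment (a power of two)."""
    return (value + alignment - 1) & ~(alignment - 1)


def assign_bars(end_nodes: Dict[str, List[int]], base: int) -> Dict[Tuple[str, int], int]:
    """Root Complex view: give every BAR of every End Node a host address.

    end_nodes maps a (hypothetical) End Node name to its BAR sizes in bytes.
    PCI BAR sizes are powers of two, and each BAR is aligned to its own size.
    """
    address_map: Dict[Tuple[str, int], int] = {}
    next_free = base
    for node, sizes in end_nodes.items():
        for index, size in enumerate(sizes):
            if size <= 0 or size & (size - 1):
                raise ValueError(f"{node} BAR{index}: size {size:#x} is not a power of two")
            start = align_up(next_free, size)
            address_map[(node, index)] = start
            next_free = start + size
    return address_map


end_nodes = {
    "storage_amc": [0x1000],               # one 4 KB register BAR
    "processor_amc": [0x10_0000, 0x1000],  # 1 MB memory BAR plus 4 KB registers
}
for (node, bar), addr in assign_bars(end_nodes, base=0x8000_0000).items():
    print(f"{node} BAR{bar} -> {addr:#010x}")
```

Note that the processor AMC's local memory BAR is mapped into the host's space just like the storage module's registers; nothing in this model lets the End Node keep a resource private.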

While this architecture is very efficient in traditional PCI environments such as ATX motherboards, it does create significant restrictions for application platforms that require embedded processing nodes with local memory or I/O resources at End Nodes in a distributed processor architecture. An understanding of PCI Express’s limitations and composition is necessary to remedy the dilemma.

PCI Express interconnect architecture limitations

PCI Express serial link specifications published by the PCI Special Interest Group (PCI-SIG) provide detailed descriptions of the voltages, line coding, embedded reference clock definitions, and other electrical attributes of these serial links. All PCI Express serial link configurations are defined as bidirectional connections using one or more pairs of unidirectional serial links. PCI Express interfaces at plug-in modules may optionally include a 100 MHz reference clock, which is used for enhanced link synchronization purposes and in spread spectrum environments.
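The lane arithmetic implied by this description can be worked through in a short Python sketch. The per-lane signaling rates (2.5 GT/s for the first PCI Express generation and 5.0 GT/s for the second, both using 8b/10b line coding) are background figures from the PCI-SIG base specifications rather than from this article, and the function name is hypothetical.

```python
# Per-lane signaling rates in megatransfers per second; background figures
# for PCIe generations 1 and 2, which both use 8b/10b line coding.
RATE_MTPS = {1: 2500, 2: 5000}


def link_summary(lanes: int, gen: int) -> dict:
    """Summarize a PCIe link of the given width (lanes) and generation."""
    if lanes not in (1, 2, 4, 8, 12, 16, 32):
        raise ValueError(f"x{lanes} is not a PCI Express link width")
    if gen not in RATE_MTPS:
        raise ValueError(f"generation {gen} is not covered by this sketch")
    # 8b/10b carries 8 data bits in every 10 bits on the wire.
    data_mbps_per_lane = RATE_MTPS[gen] * 8 // 10
    return {
        # Each lane is one transmit and one receive unidirectional link,
        "unidirectional_links": 2 * lanes,
        # and each unidirectional link is a differential pair (two conductors).
        "differential_conductors": 4 * lanes,
        # Data rate per direction after line coding, before packet overhead.
        "data_MBps_per_direction": data_mbps_per_lane * lanes // 8,
    }


print(link_summary(8, 1))
# {'unidirectional_links': 16, 'differential_conductors': 32, 'data_MBps_per_direction': 2000}
```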

Importantly, the original PCI (and PCI Express) specifications did not foresee any requirement for multiple processors within the same PCI domain, so no provision was made for this capability. In a distributed processing architecture, however, each application processor node includes the local memory and peripheral resources it needs to operate autonomously, without interference from the host processor.

The Non-Transparent Bridge (NTB) was introduced by silicon vendors to address these platform architectures. At an NTB, the host processor is assigned a small shared memory window at the applications processor, which contains both hardware registers and a small amount of physical memory. The host processor and applications processor interact by transferring data blocks through this memory window, following a defined exchange protocol. More importantly, the host processor has no knowledge of, or access to, any other resources at the applications processor. Typical design practice is to place the NTB at the embedded applications processor node rather than at the host processor node (Figure 3).

Figure 3: NTBs are provided at the applications processor node to shield local processor resources from the host processor.
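A minimal Python model of the host's view through an NTB makes the contrast with a transparent bridge explicit: addresses inside the window are translated to the applications processor's local memory, and everything else is refused. The window addresses, the message contents, and the helper names are hypothetical, and the hardware registers that the window also contains are not modeled.

```python
class NonTransparentBridge:
    """Host-side view of an NTB: only one small window is visible.

    Host addresses in [window_base, window_base + size) are translated to
    local addresses starting at local_base; everything else is rejected.
    """

    def __init__(self, window_base: int, local_base: int, size: int) -> None:
        self.window_base = window_base
        self.local_base = local_base
        self.size = size

    def translate(self, host_addr: int) -> int:
        offset = host_addr - self.window_base
        if not 0 <= offset < self.size:
            # Local resources outside the window are invisible to the host.
            raise PermissionError(f"host address {host_addr:#x} is outside the NTB window")
        return self.local_base + offset


def host_write_block(ntb: NonTransparentBridge, window_memory: bytearray,
                     host_addr: int, data: bytes) -> None:
    """Copy a data block from the host into the shared window memory."""
    if not data:
        return
    first = ntb.translate(host_addr)
    ntb.translate(host_addr + len(data) - 1)  # the whole block must fit in the window
    offset = first - ntb.local_base
    window_memory[offset:offset + len(data)] = data


ntb = NonTransparentBridge(window_base=0x9000_0000, local_base=0x0040_0000, size=0x1000)
shared = bytearray(ntb.size)  # the applications processor's shared window memory
host_write_block(ntb, shared, 0x9000_0100, b"CMD:START")
print(hex(ntb.translate(0x9000_0100)), bytes(shared[0x100:0x109]))  # 0x400100 b'CMD:START'
```

The design choice worth noting is that translation, not forwarding, is what shields the local processor: the host only ever names addresses inside the window.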

Support for embedded applications processors at End Nodes was addressed in PICMG for CompactPCI systems with the introduction of specifications supporting nontransparent bridging on the backplane CompactPCI bus. However, that effort was not extended to encompass the PCI Express infrastructure in the AdvancedTCA/AMC domain.

AMC.1 module definitions: To the rescue?

The PICMG AMC.1 specification provides signal assignments for the optional 100 MHz PCI Express reference clock. In typical platform applications, each PCI Express AdvancedMC module receives the reference clock from a central clock synchronization circuit. However, the AMC.1 specification does permit the primary Root Complex AMC module to source the reference clock as the master of the PCI Express interconnect. Management configuration options for the reference clock are limited to interface enable/disable.

The PICMG AMC.1 specification also defines the assignment of PCI Express serial links on the AdvancedMC module, as well as some host system interconnect requirements. The AMC.1 specification allows one x8 PCI Express serial link to be assigned to AMC “fat-pipe” carrier ports 4 to 11 and includes definitions for managing this interface by the platform’s Shelf Manager through the Module Management Controller (MMC) on the AdvancedMC module. In standard AdvancedMC host systems, this PCI Express link is connected to one port on a central switch located, for example, on the MicroTCA Carrier Hub (MCH) in a MicroTCA platform. Management configuration options for the serial link are limited to interface enable/disable operations and lane configuration. The MMC performs all configuration operations, based on configuration data received from the platform’s Shelf Manager, before it enables power to the application circuitry on the AdvancedMC.
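The port assignment described above can be expressed as a small lookup. This Python sketch assumes that narrower links (x1, x2, x4) occupy the lowest-numbered fat-pipe ports starting at port 4; the AMC.1 specification's own tables are authoritative for which widths and port groupings are permitted, and the function name is hypothetical.

```python
from typing import List

FAT_PIPE_PORTS = range(4, 12)  # AMC ports 4 to 11 carry the x8 PCI Express link


def ports_for_link(width: int, enabled: bool = True) -> List[int]:
    """Return the AMC ports used by a PCIe link of the given width.

    Assumption for this sketch: a narrower link uses the lowest-numbered
    fat-pipe ports, starting at port 4.
    """
    if not enabled:
        return []  # interface disabled by the MMC: no ports in use
    if width not in (1, 2, 4, 8):
        raise ValueError(f"x{width} is not a link width handled by this sketch")
    return list(FAT_PIPE_PORTS[:width])


print(ports_for_link(8))  # [4, 5, 6, 7, 8, 9, 10, 11]
print(ports_for_link(4))  # [4, 5, 6, 7]
```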

Transparent bridging is assumed for the Root Complex and all End Node AdvancedMCs, as well as at all switch port interfaces. The AMC.1 specification does provide an option for nontransparent bridging at the central switch for an interface port that hosts the secondary Root Complex. However, there is no functional specification or MMC configuration mechanism defined for providing nontransparent bridging at an End Node containing an embedded processor circuit.

Bridging the gap

Work done by GE Fanuc addresses both the bridging and reference clock configuration shortcomings of standard AdvancedMC modules. The resulting AMC.1 Universal AMC PCI Express interface architecture defines a serial link bridging function and a reference clock interface, both configurable by the MMC during power-up initialization of the AdvancedMC module.

A universal bridge is included with the PCI Express port controller, which may be implemented at the processor core or on a System-on-Chip (SoC) device that includes the processor itself, a memory controller, processor peripheral resources, and other I/O port controllers. Alternatively, the bridge function may be provided on a discrete device, such as a port switch.

In either configuration, the bridge is configured in transparent or nontransparent mode using inputs controlled by the MMC before power is applied to the processor core during hardware initialization. As defined in the AMC.1 specification, Root Complex AdvancedMC modules are always defined to have a transparent bridge, with nontransparent bridging for the secondary Root Complex module managed at the central switch. Storage or I/O End Node AMC modules controlled by the Root Complex typically do not include a bridge. End Node AdvancedMC modules with an embedded processor do have an NTB.
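The selection rules in this paragraph amount to a small decision table, sketched below in Python. The enum and function names are hypothetical; the sketch encodes only the role-to-mode mapping stated here and says nothing about how a particular device's bridge-mode inputs are wired.

```python
from enum import Enum


class Role(Enum):
    PRIMARY_ROOT_COMPLEX = "primary Root Complex"
    SECONDARY_ROOT_COMPLEX = "secondary Root Complex"
    PROCESSOR_END_NODE = "End Node with embedded processor"
    IO_END_NODE = "storage or I/O End Node"


class BridgeMode(Enum):
    TRANSPARENT = "transparent"
    NON_TRANSPARENT = "non-transparent"
    NONE = "no bridge"


def module_bridge_mode(role: Role) -> BridgeMode:
    """Bridge mode the MMC would select on the module before power-up."""
    if role in (Role.PRIMARY_ROOT_COMPLEX, Role.SECONDARY_ROOT_COMPLEX):
        return BridgeMode.TRANSPARENT
    if role is Role.PROCESSOR_END_NODE:
        return BridgeMode.NON_TRANSPARENT
    return BridgeMode.NONE  # typical case: controlled directly by the Root Complex


def central_switch_port_is_ntb(role: Role) -> bool:
    """The secondary Root Complex is isolated by an NTB at its switch port."""
    return role is Role.SECONDARY_ROOT_COMPLEX


for role in Role:
    print(f"{role.value}: module={module_bridge_mode(role).value}, "
          f"switch port NTB={central_switch_port_is_ntb(role)}")
```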

A bidirectional PCI Express reference clock interface is included in the GE Fanuc interface definition. This interface is configured to be either an input from the carrier or an output to the carrier, depending on the overall reference clock architecture in the host system. The secondary Root Complex AdvancedMC module and all End Node AMC modules are configured to receive the reference clock. The primary Root Complex AdvancedMC module may be configured to either source the reference clock or receive it from a separate clock synchronization circuit.
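The reference clock rules can be sketched as a per-module direction plan. The role strings, slot names, and the single-primary check are assumptions made for this Python illustration, not fields defined by AMC.1 or by the GE Fanuc interface definition.

```python
from enum import Enum
from typing import Dict


class ClockDir(Enum):
    INPUT = "receive from carrier"
    OUTPUT = "source to carrier"


VALID_ROLES = ("primary_rc", "secondary_rc", "end_node")


def plan_refclk(modules: Dict[str, str], primary_sources_clock: bool) -> Dict[str, ClockDir]:
    """Map each module (name -> role) to a reference clock direction.

    When the primary Root Complex does not source the clock, a separate clock
    synchronization circuit on the carrier is assumed to drive it instead.
    """
    if list(modules.values()).count("primary_rc") != 1:
        raise ValueError("expected exactly one primary Root Complex module")
    plan: Dict[str, ClockDir] = {}
    for name, role in modules.items():
        if role not in VALID_ROLES:
            raise ValueError(f"unknown role {role!r} for module {name}")
        if role == "primary_rc" and primary_sources_clock:
            plan[name] = ClockDir.OUTPUT
        else:
            # Everything else receives the clock: the secondary Root Complex,
            # the End Nodes, and a primary Root Complex that is not sourcing it.
            plan[name] = ClockDir.INPUT
    return plan


slots = {"amc1": "primary_rc", "amc2": "secondary_rc", "amc3": "end_node"}
for name, direction in plan_refclk(slots, primary_sources_clock=True).items():
    print(name, direction.value)
```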

These definitions are implemented on the GE Fanuc ASLP11 Processor AMC, and are provided to the MMC by a Shelf Manager during the initialization sequence using standard module management operations (Figure 4). The MMC deployed on the ASLP11 includes firmware to receive configuration data pertaining to these functions from the platform Shelf Management Controller (ShMC) and set appropriate hardware resources during the module’s hardware initialization.

Figure 4: The AMC.1 Universal AMC PCI Express interface architecture defines a serial link bridging function and a reference clock interface configurable by the MMC during power-up initialization.
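The ordering constraint that makes this work, configuration first and payload power second, can be modeled in a few lines of Python. The class, field names, and log strings are hypothetical, and real MMC firmware would receive the data through the standard module management operations rather than a direct method call.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModuleConfig:
    """Hypothetical configuration record received from the Shelf Manager."""
    ntb_enabled: bool    # bridge mode input: True selects non-transparent mode
    refclk_output: bool  # True drives the PCIe reference clock to the carrier


class MmcModel:
    """Toy model of the MMC: hardware settings first, payload power second."""

    def __init__(self) -> None:
        self.payload_power = False
        self.applied: Optional[ModuleConfig] = None
        self.log: List[str] = []

    def apply_config(self, cfg: ModuleConfig) -> None:
        if self.payload_power:
            # Bridge mode inputs are set before the processor powers up, so
            # changing them afterwards is rejected in this model.
            raise RuntimeError("configuration must be applied before payload power-on")
        self.applied = cfg
        self.log.append(f"set ntb={cfg.ntb_enabled} refclk_out={cfg.refclk_output}")

    def power_on_payload(self) -> None:
        if self.applied is None:
            raise RuntimeError("no configuration received from the Shelf Manager")
        self.payload_power = True
        self.log.append("payload power on")


mmc = MmcModel()
mmc.apply_config(ModuleConfig(ntb_enabled=True, refclk_output=False))  # processor End Node
mmc.power_on_payload()
print(mmc.log)  # ['set ntb=True refclk_out=False', 'payload power on']
```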

It can be done

AMC host system architectures based on PCI Express switch fabrics use the PCI Express infrastructure to transfer control data, application data, or both types of data between tightly coupled nodes in the host system. However, the PICMG AMC.1 specification does not address the bridging and clock issues that need to be resolved if the requirement is to deploy a truly distributed processing platform. The AMC.1 Universal AMC module alleviates these issues with its universal bridging and reference clock management infrastructure.

Chris Eckert is responsible for advanced product definition of processor boards and SBCs for GE Fanuc Intelligent Platforms, where he is senior system architect and principal engineer. Chris, a registered Professional Engineer, has developed board- and system-level products for a wide range of military, avionics, communications, and industrial control applications during the past 25 years. He holds a BS in Computer Engineering from Iowa State University and an MSEE from Duke University.

GE Fanuc Intelligent Platforms

800-433-2682

www.gefanucembedded.com