Can bio-science grow in military applications?

June 21, 2010

Some ideas for civilian COTS instruments with DoD applicability.

A recent FORTUNE Magazine article described Hewlett-Packard's (HP's) Central Nervous System for Earth (CeNSE), a network of a trillion tiny micro-electromechanical systems (MEMS) sensors scattered around the Earth. Bundled with wireless transmitters, the tiny sensors are envisioned to collect vibration and motion data from soil, buildings, and any other location where scientists want measurements. Petrochemical companies (such as Shell Oil) and seismologists looking for the next Big One have expressed interest in these sensors, whose MEMS technology HP originally manufactured cheaply and in huge volumes for its inkjet printers. One can easily imagine military uses such as monitoring runways and no-go interdiction zones, or providing perimeter defense. The sensors would be a smaller and less costly alternative to radar, IR, or even CCTV sensors, all of which require operator intervention or sophisticated embedded computers to interpret sensor events.

So I got to thinking: like HP's MEMS sensors, could COTS bio-sensors benefit the warfighter or DoD, or find use in homeland security applications? I'm not sure, but here are some examples of the "precision plant measurement" instruments manufactured by Camas, Washington-based CID Bio-Science (www.cid-inc.com).

Leaf area meters

Look out your window and you’ll see that leaves vary in size, shape, thickness, and color. And thinking back to your high school biology class, you’ll recall that a plant’s leaves convert sunlight into food energy while emitting healthy oxygen back to the environment. When determining a plant’s overall bio-structure and health, scientists need to know the total area of a plant’s leaves. But what on Earth would you measure that with?

CID's handheld laser leaf area meter and its portable laser leaf area meter (similar guts-wise) use a 650 nm laser diode scanner element to distinguish leaf from non-leaf background and calculate leaf area. With the portable device, simply pulling a leaf through the handheld wand measures area, width, perimeter, shape factor¹, and aspect ratio. Resolution of up to 0.025 mm² at ±1 percent with a scanning rate of 127 mm/s (palette version), or 0.01 mm² at 200 mm/s (wand), yields reasonably high-resolution images and accurate area measurements even for fine-veined leaves and evergreen needles.
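
To make the footnoted metrics concrete, here is a minimal Python sketch that computes them from a binary scan mask. It is not CID's algorithm. It assumes each scan sample is a square pixel of known size (the hypothetical `pixel_mm` parameter) and approximates perimeter by counting exposed pixel edges. It uses the article's own footnote definitions: shape factor = area / perimeter, and aspect ratio = length / maximum width.

```python
from dataclasses import dataclass


@dataclass
class LeafMetrics:
    area_mm2: float
    perimeter_mm: float
    length_mm: float
    max_width_mm: float
    shape_factor: float  # area / perimeter (footnote 1's definition)
    aspect_ratio: float  # length / maximum width (footnote 1's definition)


def leaf_metrics(mask: list[list[int]], pixel_mm: float) -> LeafMetrics:
    """Hypothetical sketch: measure a leaf from a binary scan mask.

    mask[row][col] is 1 for leaf and 0 for background. Rows follow the
    direction the leaf is pulled through the scanner (its length), and each
    pixel is assumed to be a square pixel_mm on a side.
    """
    rows, cols = len(mask), len(mask[0])

    def is_leaf(r: int, c: int) -> bool:
        return 0 <= r < rows and 0 <= c < cols and mask[r][c] == 1

    area_px = 0
    boundary_edges = 0
    leaf_rows = []
    max_width_px = 0  # widest row, counted in leaf pixels
    for r in range(rows):
        row_px = sum(mask[r])
        if row_px:
            leaf_rows.append(r)
            max_width_px = max(max_width_px, row_px)
        for c in range(cols):
            if mask[r][c] == 1:
                area_px += 1
                # Count each pixel side that faces background or the image border.
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    if not is_leaf(r + dr, c + dc):
                        boundary_edges += 1

    if area_px == 0:
        raise ValueError("mask contains no leaf pixels")

    area = area_px * pixel_mm ** 2
    perimeter = boundary_edges * pixel_mm
    length = (leaf_rows[-1] - leaf_rows[0] + 1) * pixel_mm
    width = max_width_px * pixel_mm
    return LeafMetrics(area, perimeter, length, width, area / perimeter, length / width)


# A 4 x 2 pixel "leaf" scanned at 0.5 mm per pixel.
m = leaf_metrics([[1, 1], [1, 1], [1, 1], [1, 1]], pixel_mm=0.5)
print(m.area_mm2, m.perimeter_mm, m.aspect_ratio)  # 2.0 6.0 2.0
```

Edge counting overstates the perimeter of curved or diagonal leaf margins (the "staircase" effect), so a production instrument would likely smooth the outline before measuring it.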

How might these battery-operated instruments be used in military applications? When I asked the engineers at CID, they really had no idea. (They're biologists, after all.) But while perusing the DARPA website, I saw a number of R&D sensor programs that seem related to this kind of instrument. For example, sensors that monitor a soldier's or Marine's health might measure hair thickness or skin surface area. Measuring the amount of Nuclear, Biological, Chemical (NBC) agent on the skin might help give a prognosis for those exposed to hazards. A leaf area sensor could conceivably be adapted to measure body hair or skin, feeding that information into an overall prognosis.

These instruments can also measure the area of any kind of "stain," from blood or chemical residue on a briefcase to oil washed onshore and clinging to saw grass in the Gulf of Mexico. And it seems to me that cheaply measuring ammonium nitrate residue on objects might provide advance warning of bomb threat material. Sure, fancy spectrographic analyzers and gas chromatographs easily provide chemical concentration charts, but they aren't handheld and surely aren't cheap.

Digital root imager

A derivative of the leaf imager, the in situ root imager slides into a clear tube in the ground and non-destructively measures plant roots and other buried objects. For botanists, this reveals a plant's health through its root structure without having to yank the plant from the earth. The 360-degree scanning sensor records full-color images at 1,200 dpi, with a 21.6 x 19.6 cm image containing up to 188 million pixels.
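
The quoted image figures invite a quick arithmetic check. The sketch below converts the image dimensions to inches and multiplies each by the scan resolution. It assumes square pixels and one sample per pixel, which may not be how CID counts pixels.

```python
MM_PER_INCH = 25.4


def scan_pixels(width_cm: float, height_cm: float, dpi: int) -> tuple[int, int, int]:
    """Return (columns, rows, total pixels) for a scan of the given size and resolution."""
    cols = round(width_cm * 10 / MM_PER_INCH * dpi)
    rows = round(height_cm * 10 / MM_PER_INCH * dpi)
    return cols, rows, cols * rows


cols, rows, total = scan_pixels(21.6, 19.6, 1200)
print(cols, rows, total)  # 10205 9260 94498300
```

At 1,200 dpi this works out to roughly 94.5 million pixels, about half the quoted 188 million. The quoted figure may therefore assume a different resolution or pixel-counting convention, and CID's specification sheet would be the authority here.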

In military applications, one could envision a similar instrument searching for visible objects in soil, liquid, or other hard-to-reach places where a low-light video camera would capture poorer images than a laser-scanner-based imager. As before, ground-penetrating radar or sonar can also produce non-destructive (though only indirectly representative) images of objects. But portability and sheer low cost might make a digital root imager far more appealing and deployable to warfighters hunting for IEDs or other paraphernalia.

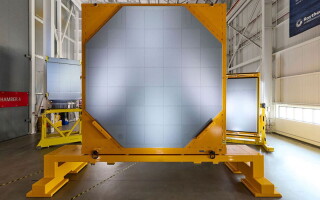

Digital plant canopy imager

This last device is fairly distinctive, though I struggle to find direct applicability in military applications. A video camera (768 x 494 pixels) fitted with an upward-looking Pentax 150-degree fish-eye lens rides on a simple freewheeling gimbaled boom. Sophisticated software interprets the overhead forest canopy images and calculates canopy coverage and other biological metrics, including Leaf Area Index (LAI) and Photosynthetically Active Radiation (PAR) levels. I think the magic here is the COTS software, which digitizes and manipulates captured images based on the azimuth and zenith angles entered by the operator. Sunshine that makes it through is counted as "not canopy," and color shades can be interpreted as vertical canopy density. At the heart of this system is a gap-fraction inversion algorithm, which provides a remarkably accurate estimate of canopy coverage².
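
The gap-fraction idea can be sketched in a few lines of Python. This is not CID's software. It assumes the textbook random-foliage (Beer-Lambert) model P(θ) = exp(-G·LAI / cos θ), which is commonly used in gap-fraction methods. It also assumes a hypothetical brightness threshold (`sky_threshold`) that separates sky from canopy, and a leaf projection coefficient G = 0.5.

```python
import math


def gap_fraction(pixels: list[int], sky_threshold: int) -> float:
    """Fraction of ring pixels brighter than sky_threshold, i.e. counted as sky ("not canopy")."""
    if not pixels:
        raise ValueError("no pixels in zenith ring")
    sky = sum(1 for p in pixels if p > sky_threshold)
    return sky / len(pixels)


def lai_from_gap_fraction(gap: float, zenith_deg: float, g: float = 0.5) -> float:
    """Invert the random-foliage model P(theta) = exp(-G * LAI / cos(theta)) for LAI.

    g = 0.5 is an assumption (spherical leaf-angle distribution), not a measurement.
    """
    if not 0.0 < gap <= 1.0:
        raise ValueError("gap fraction must be in (0, 1]; a fully closed ring cannot be inverted")
    if not 0.0 <= zenith_deg < 90.0:
        raise ValueError("zenith angle must be in [0, 90) degrees")
    return -math.cos(math.radians(zenith_deg)) * math.log(gap) / g


# Hypothetical 8-bit brightness values from one zenith ring around 57.5 degrees:
# 30 bright sky pixels and 70 dark foliage pixels.
ring = [220] * 30 + [40] * 70
p = gap_fraction(ring, sky_threshold=128)
print(p)                                         # 0.3
print(round(lai_from_gap_fraction(p, 57.5), 2))  # 1.29
```

The 57.5-degree ring is a common choice because, near that zenith angle, G stays close to 0.5 for almost any leaf-angle distribution. Fuller methods combine several rings across the fish-eye image.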

How might this apply to defense applications? Looking for patterns in clouds or aerial formations perhaps? Passively estimating vertical distances without signal emanation? Performing ceiling tile reconnaissance? I’m interested in your ideas about these three technologies – and I’ll publish them. Let me know.

Chris A. Ciufo, Editor

1 Shape factor = ratio of area to perimeter. Aspect ratio = ratio of length to maximum leaf width.

2 Courtesy info from CID: J. M. Norman and G. S. Campbell (1989), "Canopy Structure." In: R. W. Pearcy, J. R. Ehleringer, H. Mooney, and P. W. Rundel (eds.), Plant Physiological Ecology: Field Methods and Instrumentation, Chapman & Hall, London and New York, pp. 301-325.