Clustered GPUs closing in on supercomputer performance

May 31, 2010

Pairing multiple GPUs with a single CPU offers even greater performance than the typical one-to-one relationship between the two.

The potential of high-end Graphics Processing Units (GPUs) to efficiently process complex, real-time sensor image and video data has been recognized for some time. GPU-oriented, C-based computing languages such as OpenCL (Khronos Group) and CUDA (NVIDIA) give the programmer access to the arrays of processing cores within each GPU, typically offering around 500 GFLOPS of performance for highly parallel, data-intensive algorithms. Combining multiple GPUs into computing clusters, using industry-standard, open-architecture formats, will bring TFLOPS performance levels to rugged, embedded sensor processing applications such as multisensor, multiplatform tactical battlefield surveillance.

The tried-and-trusted solution for complex sensor processing is a heterogeneous configuration: an FPGA front end plus a multicomputing array of vector processors, such as Freescale’s 8640 Power Architecture devices. An FPGA front end is ideal for this role: its flexible, high-speed I/O interfaces directly to the sensor, and it can be applied relatively easily to repetitive, parallel algorithms such as filtering and data reduction. For maximum board real-estate efficiency, multiple ASICs or FPGAs have also been used in place of the vector processors. However, this approach has proven time-consuming to develop, test, and verify. In addition, the promise of FPGA reconfigurability has never truly been realized, making life-cycle support and upgrades prohibitively expensive for all but the most generously funded projects. Much of this difficulty with portability and maintainability stems from the fact that FPGA code is a hardware description specific to the problem being solved, often incorporating third-party cores and logic optimizations needed for reliable operation over temperature extremes.

Software holds key to portability

CUDA and OpenCL provide abstracted, multithreaded computing models, coded in C with extensions that suit GPU-specific hardware architectures. As a result, the major GPU manufacturers, such as NVIDIA and ATI (AMD), do not need to release detailed hardware descriptions of the processor-core arrays in each device, which will change as devices evolve. Currently, each processor core is a very fast, single-precision math engine, much simpler than a general-purpose processor. It has no register set as such, no predictive logic, and no prefetch capability; instead, it is designed to be constantly fed with data and instructions. A typical GPU contains many of these cores. (NVIDIA refers to them as CUDA cores.)

Eight cores form a group with a common register set and shared memory, known collectively as a streaming multiprocessor. Each streaming multiprocessor runs simple control logic that dispatches operands to its cores and organizes their results. Each GPU has many such multiprocessor groups. Multithreading is inherent in this type of architecture, because individual cores are required only to perform a math operation on whatever data is presented to them, and that data can come from a different thread from one cycle to the next. Multithreading is transparent to the programmer, with the compiler and development tools dividing tasks among the streaming multiprocessors to make the most efficient use of available resources.

General-purpose host CPU

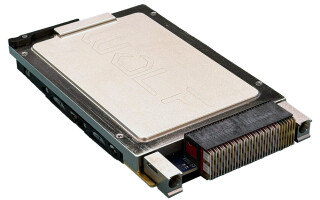

OpenCL and CUDA require a general-purpose CPU to host the application and dispatch tasks to a GPU. This host is typically based on the desktop PC architecture, using the GPU as a math accelerator in addition to its primary role as a graphics device. This basic CPU/GPU configuration is mirrored by many off-the-shelf, embeddable PC products in formats such as CompactPCI or VPX (VITA 46), some of which are available in ruggedized form for deployment in harsh military environments. However, breaking the one-to-one relationship between CPU and GPU yields even greater performance gains: a cluster of two or three GPUs can be made accessible to a single CPU via a high-speed PCI Express switch. The VPX module format, in particular, can route PCI Express off-board through the backplane, allowing a host CPU to access a cluster of GPUs located on other VPX modules within the same chassis. Such a configuration can deliver TFLOPS of performance in a form that can be embedded in deployable systems. As depicted in Figure 1, the NPN240 from GE Intelligent Platforms adopts this architecture, packing dual NVIDIA GT240 GPUs and DDR3 local memory onto a single rugged 6U VPX module.

Figure 1: The NPN240 multiprocessor from GE Intelligent Platforms


OpenCL and CUDA offer unprecedented levels of performance for the repetitive, parallel processing tasks found in advanced sensor systems. Importantly, these are software-based technologies, which makes applications easier to develop, maintain, and port to future GPU generations. GPUs are evolving rapidly, and the next generations have already been announced, such as NVIDIA's Fermi. Fermi will offer many more CUDA cores in total, grouped 32 per streaming multiprocessor, along with on-chip caches, more logic within each core, and full support for double-precision floating-point math, suiting it to new high-performance, high-resolution sensor types.

To learn more, e-mail Duncan at [email protected].