Releasing the processing potential of GPUs

October 27, 2009

High-performance Graphics Processing Units (GPUs) have evolved from fixed-function graphics execution units to SIMD processors. The key to exploiting them for general processing lies in a new generation of tools, including CUDA and OpenCL, that integrate code development across heterogeneous CPU/GPU architectures.

The internal computing architecture of high-performance Graphics Processing Units (GPUs) has evolved from fixed-function graphics execution units to arrays of fully programmable Single Instruction Multiple Data (SIMD) processors. This evolution has been driven by demand from the video gaming community for generic physics calculations performed in parallel, which give greater realism to the behavior of, for example, smoke, debris, fire, and fluids. The ability to offload these same types of repetitive parallel calculations onto a GPU and accelerate them offers great potential for military technologies such as radar, sonar, and image processing. The key to efficient implementation is a new generation of tools such as OpenCL and CUDA, which integrate code development across heterogeneous CPU/GPU architectures, along with memory and I/O bandwidth sufficient to support them.

SIMD processing arrays

At its heart, a high-performance GPU device will typically have up to 128 32-bit single-precision processor cores clocked at 1 GHz or more. These are organized as parallel SIMD arrays so that groups of processors can execute the same instructions on different data sets in parallel. When operating as a graphics device, the GPU’s primary task is to execute animated 3D graphics functions such as shaders. However, GPUs are evolving away from being specific shader processors and are becoming more generic math processors, now referred to as “stream processors.” With the right tools, GPUs can be applied much more broadly to accelerate many kinds of PC-based applications such as genetic research, seismic processing, meteorological processing, and DSP, at much lower cost than other more specific forms of hardware acceleration.
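The SIMD idea described above can be illustrated with a minimal sketch in plain C. It is a serial model only, not GPU code: the two-instruction set and the names `simd_op` and `simd_issue` are hypothetical and exist purely for illustration. The point it shows is that one instruction is selected once and then applied to every lane's data.

```c
#include <stddef.h>

/* Hypothetical two-instruction set, for illustration only. */
enum simd_op { SIMD_ADD, SIMD_MUL };

/* Issue ONE instruction across `lanes` data elements.
   The instruction is selected once, outside the lane loop; only the
   data differs from lane to lane. Real SIMD hardware would perform the
   per-lane work simultaneously; this model visits the lanes in turn. */
void simd_issue(enum simd_op op, const float *a, const float *b,
                float *result, size_t lanes)
{
    switch (op) {
    case SIMD_ADD:
        for (size_t lane = 0; lane < lanes; ++lane) {
            result[lane] = a[lane] + b[lane];
        }
        break;
    case SIMD_MUL:
        for (size_t lane = 0; lane < lanes; ++lane) {
            result[lane] = a[lane] * b[lane];
        }
        break;
    }
}
```

Calling `simd_issue(SIMD_ADD, a, b, result, 4)` applies the same addition to four independent pairs of operands, which is the pattern a SIMD array executes in a single step.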

One major GPU manufacturer, NVIDIA, has developed a software environment known as CUDA to release the potential of the GPU into these other application areas. CUDA supports the combination of CPU and GPU by allowing inline C code development through an abstracted library of functions that hides the specifics of the GPU’s stream processors and their interface with the CPU. This provides a very flexible programming interface and permits growth or even radical change of the stream processors in the future, without impacting existing code. To reduce the scope for errors, CUDA adopts a straightforward programming model that manages multiple threads internally to optimize processor utilization so that there is no need to write explicitly threaded code.
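To make the programming model more concrete, the following is a rough CPU-only emulation in plain C, not actual CUDA code. The developer writes the work for one logical thread, and a launch step (here a pair of ordinary loops) applies it across all elements. The function names `add_one_element` and `launch_add` and the block size are assumptions made for this sketch.

```c
#include <stddef.h>

/* "Kernel" body: the work performed by ONE logical thread.
   The bounds check lets the launch round up to whole blocks. */
static void add_one_element(size_t global_index, const float *x,
                            const float *y, float *sum, size_t n)
{
    if (global_index < n) {
        sum[global_index] = x[global_index] + y[global_index];
    }
}

/* Serial stand-in for a runtime launch: every (block, thread) pair runs
   the same kernel body on its own element. On a GPU the runtime would
   schedule these logical threads onto the stream processors.
   threads_per_block must be greater than zero. */
void launch_add(const float *x, const float *y, float *sum, size_t n,
                size_t threads_per_block)
{
    size_t blocks = (n + threads_per_block - 1) / threads_per_block;
    for (size_t block = 0; block < blocks; ++block) {
        for (size_t thread = 0; thread < threads_per_block; ++thread) {
            add_one_element(block * threads_per_block + thread,
                            x, y, sum, n);
        }
    }
}
```

In CUDA itself, the equivalent index is conventionally formed from the built-in variables as `blockIdx.x * blockDim.x + threadIdx.x`, and the two loops disappear because the runtime creates and schedules the threads. The application code contains no explicit thread management.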

GPU without graphics

Paradoxically, there will be a class of embedded applications that will not generate any local graphical output at all. Typically, this class could include image processing in UAVs, underwater Remotely Operated Vehicles (ROVs), and many other types of unmanned sensors. An embedded PC with a GPU becomes an ideal platform for image enhancement, stabilization, pattern recognition, target tracking, video encoding, or encryption/decryption. These are all applications that can be written in regular C code to run on a high-performance PC but could be accelerated to run orders of magnitude faster by a GPU stream processor. The GPU provides generic parallel processing already integrated into many PC configurations. It also requires less specialized skill than, for example, FPGA development, because the application can be constructed, tested, and verified with off-the-shelf tools such as CUDA, the MathWorks’ MATLAB, and ported VSIPL DSP libraries.
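As an illustration of the kind of regular C code that lends itself to this acceleration, here is a minimal sketch of a 3x3 mean filter, a simple noise-smoothing step used here as a stand-in for image enhancement. The filter choice, the function name `box_blur_3x3`, and the 8-bit grayscale row-major layout are assumptions for this example and are not taken from any particular product.

```c
#include <stddef.h>
#include <stdint.h>

/* 3x3 mean filter over an 8-bit grayscale image stored row-major.
   Border pixels are copied unchanged to keep the sketch short.
   src and dst must be separate buffers of width * height bytes. */
void box_blur_3x3(const uint8_t *src, uint8_t *dst,
                  size_t width, size_t height)
{
    for (size_t y = 0; y < height; ++y) {
        for (size_t x = 0; x < width; ++x) {
            size_t i = y * width + x;
            if (x == 0 || y == 0 || x + 1 == width || y + 1 == height) {
                dst[i] = src[i];
                continue;
            }
            /* Sum the pixel and its eight neighbours (max 9 * 255). */
            unsigned sum = 0;
            for (size_t dy = 0; dy < 3; ++dy) {
                for (size_t dx = 0; dx < 3; ++dx) {
                    sum += src[(y + dy - 1) * width + (x + dx - 1)];
                }
            }
            dst[i] = (uint8_t)(sum / 9);
        }
    }
}
```

Each output pixel depends only on the input image, never on another output pixel. That independence is what makes a loop like this a natural candidate for a stream processor, where each pixel could be handled by its own thread.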

PCI Express key to performance

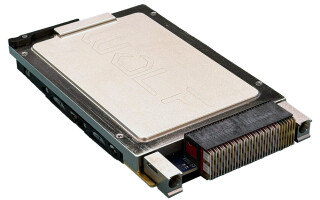

An embedded sensor processing application requires high data bandwidth to receive and process the continuous stream of incoming raw image data. CUDA’s ability to handle multithreading, and so keep the GPU’s SIMD arrays fully loaded, depends on the performance of both the GPU’s external interface and its local memory interface. High-end GPU devices will use 16-lane PCI Express 2.0, which doubles the earlier PCI Express 1.0 data rate to give a theoretical 500 MBps per lane in each direction. For rugged embedded applications, this fits well with both the popular 3U and 6U formats of the VPX (ANSI/VITA 46) packaging standards with their extended high-speed connectivity. The MAGIC1 rugged embedded PC from GE Fanuc Intelligent Platforms, pictured in Figure 1, is based on the 3U VPX form factor and has been reengineered and enhanced to support CUDA-capable GPUs from NVIDIA. While such an embedded PC fits comfortably within the 3U format, a 6U profile also has the real estate and greater connectivity to support a new class of powerful multicomputing engines based on a number of multicore processors and GPUs using PCI Express 2.0 as the interconnect.
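The link arithmetic behind these figures can be sketched in a few lines of C. The function names and the 1920 x 1080, 8-bit frame used in the usage note are hypothetical choices for illustration. The sketch also assumes 1 MB = 1,000,000 bytes and ignores protocol overhead, so the results are theoretical upper bounds rather than achievable throughput.

```c
#include <stdint.h>

/* Theoretical per-lane, per-direction rates in MB/s (1 MB = 10^6 bytes). */
#define PCIE1_MB_PER_S_PER_LANE 250u  /* PCI Express 1.0 */
#define PCIE2_MB_PER_S_PER_LANE 500u  /* PCI Express 2.0 */

/* Theoretical one-direction link bandwidth in bytes per second. */
uint64_t link_bytes_per_second(unsigned lanes, unsigned mb_per_s_per_lane)
{
    return (uint64_t)lanes * mb_per_s_per_lane * 1000000u;
}

/* Upper bound on whole frames per second that the link could carry,
   ignoring protocol overhead. Returns 0 for an empty frame. */
uint64_t max_frames_per_second(uint64_t link_bytes_per_s,
                               uint64_t frame_bytes)
{
    return frame_bytes == 0 ? 0 : link_bytes_per_s / frame_bytes;
}
```

For a 16-lane PCI Express 2.0 link, `link_bytes_per_second(16, PCIE2_MB_PER_S_PER_LANE)` gives 8,000,000,000 bytes per second in each direction, twice the figure for the same width at PCI Express 1.0 rates. Dividing that by the size of a hypothetical 1920 x 1080 8-bit frame (2,073,600 bytes) gives an upper bound of 3,858 frames per second, which suggests how much headroom such a link leaves for raw sensor streams.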

The GPU is evolving rapidly, creating a processing capability with broad application across many diverse markets. CUDA and similar development environments provide accessibility to this untapped performance reserve. Consequently, the rugged military and aerospace domain appears set to change the way complex, time-consuming sensor applications can be developed, tested, verified, and successfully deployed.

Figure 1: MAGIC1 is a rugged, embedded PC from GE Fanuc Intelligent Platforms, based on the 3U VPX form factor and reengineered and enhanced to support CUDA-capable GPUs from NVIDIA.

To learn more, e-mail Duncan Young at [email protected].