Why autonomous systems must be assured like weapons systems

Story | May 07, 2026

In defense-related environments where mission success, survivability, and resilience are paramount, assurance must be as adaptive and persistent as the systems it supports. Autonomous platforms will only deliver their promised advantages when software assurance evolves from a one-time certification exercise into a sustained engineering discipline.

Autonomous military platforms are rapidly reshaping modern defense. By combining advanced sensors, software, and artificial intelligence (AI), these systems can operate at machine speed, extend reach, and reduce risk to human operators. At the same time, they introduce a fundamental shift in how software assurance must be approached. As autonomy increases, so does reliance on nondeterministic, data-driven behavior. The result is a paradox: Autonomy promises greater effectiveness, but only if software assurance becomes stronger, more continuous, and more systemic.

Scale and speed unattainable with traditional rule-based software

It is critical to distinguish between AI and autonomy. Autonomy is a system-level capability; that is, the ability to perform missions within defined rules of engagement, manage uncertainty, and transition safely between modes of operation. AI is one way of enabling that capability, but it is not the objective itself. From an assurance perspective, this is an important distinction: safety, security, and mission effectiveness must be evaluated at the system level. While an AI model may perform well in isolation, it might still contribute to unsafe or ineffective behavior once it is integrated with control logic, sensors, and actuators.

Why AI complicates verification and certification

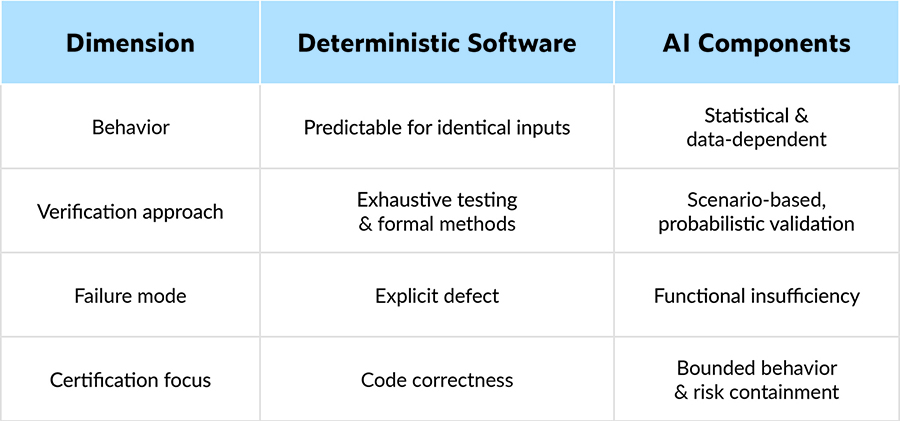

AI increases system complexity in ways that traditional verification methods were not designed to handle. Classical software behaves deterministically – given the same inputs, it produces the same outputs. Deep-learning models, on the other hand, do not behave so predictably: their outputs are statistical, influenced by training data, confidence thresholds, and subtle variations in sensor inputs. Small changes in lighting, noise, or context can alter outcomes in ways that are difficult to predict exhaustively.

Such nondeterminism creates challenges for certification and assurance. It is not feasible to test all possible operating conditions, nor to prove correctness across every scenario the system may encounter. Yet defects or blind spots in an AI model can propagate into higher-level decision-making, potentially triggering unsafe actions, incorrect classifications, or unintended system responses.

Many of these failures fall into a class known as functional insufficiencies: situations where a system behaves unsafely even though no component has technically failed. Sensors operate as designed, software executes correctly, and the AI model behaves exactly as it was trained, but the outcome is still unacceptable. This situation often occurs when real-world operating conditions differ from those represented during development – whether changes in environment, context, or adversarial behavior.

These obstacles must be addressed through complementary assurance perspectives rather than reliance on any single prescriptive standard. At the system level, defense safety and mission-assurance engineering focuses on identifying and mitigating unsafe behavior that can emerge even when all components operate as designed; that is, situations where the intended functionality proves insufficient under real operational conditions.

At the AI level, assurance focuses on how AI-enabled capabilities are specified, developed, verified, deployed, and sustained across their life cycle, with explicit recognition of uncertainty, performance limits, and failure modes. This process includes understanding how models behave outside nominal conditions, how they interact with deterministic control logic, and how their outputs are constrained to prevent localized failures from escalating into mission or safety failures.

Taken together, these perspectives reinforce a core principle for military systems: safety, security, and mission assurance must be argued and evidenced at the system level, while AI components are treated as inherently uncertain contributors whose behavior must be bounded and continuously revalidated.

Guardrails as the foundation of trustworthy autonomy

AI-enabled autonomous systems depend on a broader software and hardware infrastructure that performs deterministic control of propulsion, navigation, communications, and weapons systems. Within this architecture, guardrails – software and hardware mechanisms that check, constrain, and contextualize AI outputs before they influence mission-critical actions – play a central role.

Effective guardrails operate on both inputs and outputs: On the input side, they assess sensor health, detect missing or corrupted data, and prevent unreliable streams from influencing the model. On the output side, they enforce policy constraints, confidence thresholds, and plausibility checks to ensure that recommendations made by AI remain within safe and authorized bounds. When uncertainty exceeds acceptable limits, the system can transition to a degraded mode, invoke deterministic fallback logic, or hand control back to a human operator.
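As a rough illustration of input-side and output-side guardrails, the following Python sketch checks a sensor frame for missing data and then applies a confidence threshold and a plausibility limit to an AI recommendation. Every name, field, and threshold here (`Recommendation`, `guard`, the 0.8 confidence floor, the 30-degree turn limit) is a hypothetical assumption chosen for the example, not something taken from a specific platform or standard.

```python
import math
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Sequence


class Action(Enum):
    ACCEPT = "accept"      # AI recommendation may proceed
    FALLBACK = "fallback"  # invoke deterministic fallback logic
    HANDOVER = "handover"  # hand control back to a human operator


@dataclass
class Recommendation:
    heading_deg: float  # hypothetical AI output: commanded heading
    confidence: float   # model confidence, expected in [0, 1]


def input_ok(sensor_frame: Sequence[Optional[float]]) -> bool:
    """Input guardrail: reject empty frames and frames with missing samples."""
    if not sensor_frame:
        return False
    return all(v is not None and not math.isnan(v) for v in sensor_frame)


def guard(sensor_frame: Sequence[Optional[float]],
          rec: Recommendation,
          current_heading_deg: float,
          min_confidence: float = 0.8,   # assumed threshold
          max_turn_deg: float = 30.0) -> Action:  # assumed plausibility limit
    # Input side: unreliable data must not influence the decision.
    if not input_ok(sensor_frame):
        return Action.FALLBACK
    # Output side: confidence threshold. Written so that NaN fails the check.
    if not (rec.confidence >= min_confidence):
        return Action.HANDOVER
    # Output side: plausibility check on the smallest angular difference.
    turn = abs((rec.heading_deg - current_heading_deg + 180.0) % 360.0 - 180.0)
    if not (turn <= max_turn_deg):
        return Action.FALLBACK
    return Action.ACCEPT
```

For example, `guard([1.0, 2.0], Recommendation(10.0, 0.95), 350.0)` accepts a 20-degree turn across north, while the same recommendation with a NaN sample in the frame is routed to the fallback path. The comparisons are deliberately phrased as "not within bounds" so that a NaN confidence or heading cannot slip through as acceptable.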

Redundancy is another proven technique. In flight and mission software, it is common to run multiple algorithms or models in parallel and compare results. Discrepancies trigger monitoring logic that can suppress unsafe actions or initiate corrective behavior. Redundant systems acknowledge that AI can fail and ensure that such failures do not translate directly into mission or safety failures.
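One simple way to picture this compare-and-monitor pattern is a median voter over redundant estimates. The Python sketch below is an assumption-laden illustration: the function name `vote`, the tolerance value, and the majority rule are hypothetical choices, and fielded systems may use quite different voting schemes.

```python
from statistics import median
from typing import Optional, Sequence, Tuple


def vote(estimates: Sequence[float],
         tolerance: float) -> Tuple[Optional[float], bool]:
    """Compare redundant estimates of the same quantity.

    Returns (value, discrepancy). `value` is the median when a strict
    majority of channels agree with it within `tolerance`; otherwise it is
    None, which the caller should treat as "suppress the action".
    `discrepancy` is True whenever at least one channel disagrees.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    mid = median(estimates)
    agreeing = [e for e in estimates if abs(e - mid) <= tolerance]
    discrepancy = len(agreeing) < len(estimates)
    if 2 * len(agreeing) <= len(estimates):
        return None, True  # no majority: do not act on any channel
    return mid, discrepancy
```

With three channels reporting `[100.0, 101.0, 250.0]` and a tolerance of 2.0, the voter returns `(101.0, True)`: the outlier is outvoted, but the discrepancy flag lets monitoring logic record the event. With `[10.0, 50.0, 90.0]` no majority exists, so the result is `(None, True)` and the action is suppressed.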

Continuous assurance, not one-time verification

Traditional defense systems rely on extensive predeployment testing, evaluation, verification, and validation. While still essential, these practices are no longer sufficient on their own for AI-enabled autonomy. The operational environment is too dynamic, and AI behavior too dependent on data, for assurance to end at release.

Continuous assurance can address this gap. Runtime monitoring captures deviations from expected behavior, out-of-distribution inputs, confidence violations, and guardrail activations. The operational evidence feeds back into development to inform updates to AI models, guardrails, or system-level policies. Updates are then revalidated through controlled pipelines before being deployed back to the field.
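A minimal sketch of such a runtime monitor, assuming a single scalar input feature and a z-score test against training statistics as a stand-in for out-of-distribution detection, might look like the following. The class name, thresholds, and event labels are hypothetical, and real systems would typically use richer detectors.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class RuntimeMonitor:
    train_mean: float            # feature mean observed during development
    train_std: float             # feature standard deviation (must be > 0)
    z_limit: float = 3.0         # assumed out-of-distribution limit
    min_confidence: float = 0.8  # assumed confidence floor
    events: List[Tuple[int, str, float]] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.train_std <= 0:
            raise ValueError("train_std must be positive")

    def observe(self, t: int, feature: float, confidence: float) -> bool:
        """Return True if the sample looks nominal; otherwise log evidence."""
        ok = True
        z = abs(feature - self.train_mean) / self.train_std
        if z > self.z_limit:
            self.events.append((t, "out_of_distribution", z))
            ok = False
        if confidence < self.min_confidence:
            self.events.append((t, "low_confidence", confidence))
            ok = False
        return ok
```

The `events` list is the operational evidence in this sketch: it can be exported after a mission and fed back into development to decide whether the model, the guardrail thresholds, or the system-level policy needs to change.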

In some architectures, learning occurs offline, with retrained models uploaded after validation. In others, learning may occur on platform, with redundant models operating side by side as one executes and one learns. Only after the updated model satisfies defined assurance criteria is it permitted to replace the active model. In all cases, assurance becomes a living process rather than a static milestone.
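The promotion step can be thought of as a gate over evidence collected while both models run side by side. The sketch below assumes that each model's outputs on the same inputs have already been scored as correct or incorrect; the function name and the criteria (a minimum sample count, a minimum accuracy, and no regression against the active model) are hypothetical examples of "defined assurance criteria", not a prescribed set.

```python
from typing import Sequence


def should_promote(active_correct: Sequence[bool],
                   candidate_correct: Sequence[bool],
                   min_samples: int = 1000,       # assumed evidence floor
                   min_accuracy: float = 0.95) -> bool:  # assumed criterion
    """Decide whether the candidate model may replace the active one.

    Both sequences hold per-sample correctness on the SAME inputs,
    gathered while the candidate ran in shadow mode.
    """
    if len(active_correct) != len(candidate_correct):
        raise ValueError("models must be scored on the same samples")
    n = len(candidate_correct)
    if n < min_samples:
        return False  # not enough evidence yet
    active_acc = sum(active_correct) / n
    candidate_acc = sum(candidate_correct) / n
    # Promote only if the absolute criterion is met and nothing regresses.
    return candidate_acc >= min_accuracy and candidate_acc >= active_acc
```

Until the gate returns True, the candidate's outputs are only recorded and never drive the platform, which keeps the learning path separate from the executing path.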

Shifting verification left, sustaining it throughout the life cycle

To make continuous assurance practical, verification and validation must begin early and scale across the life cycle. This requires a shift-left approach that combines multiple test strategies. Isolated testing validates individual AI components and software modules, integration testing evaluates interactions between AI, sensors, and control logic, and system-level testing assesses mission behavior, safety properties, and resilience against adversarial conditions.

Beyond functional testing, quality and security checks are essential. Static analysis can automatically enforce secure coding and safety standards such as MISRA C/C++ and CERT C/C++ as soon as code is written or modified. Regression testing ensures that incremental changes do not reintroduce defects, while coverage analysis highlights untested paths that may hide safety-critical behavior.

Modern defense systems increasingly rely on commercial off-the-shelf (COTS) components and products aligned with the modular open systems approach (MOSA). These elements must be included in the verification framework. Regression and interface testing confirm correct API usage, while robustness and security tests ensure that parameter checking and boundary enforcement prevent misuse, faults, or exploitation. (Table 1.)
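To make parameter checking and boundary enforcement concrete, the following sketch wraps a hypothetical component command behind a checked interface and then runs a small robustness suite over boundary and invalid values. The function `checked_pan_command` and the pan limits are invented for illustration and do not correspond to any real COTS API.

```python
import math

# Hypothetical mechanical limits of a sensor gimbal, in degrees.
PAN_MIN_DEG, PAN_MAX_DEG = -170.0, 170.0


def checked_pan_command(angle_deg) -> float:
    """Validate a pan command before it reaches the underlying component."""
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(angle_deg, bool) or not isinstance(angle_deg, (int, float)):
        raise TypeError("angle must be a real number")
    if not math.isfinite(angle_deg):
        raise ValueError("angle must be finite")
    if not PAN_MIN_DEG <= angle_deg <= PAN_MAX_DEG:
        raise ValueError("angle outside mechanical limits")
    return float(angle_deg)


def run_robustness_suite() -> dict:
    """Exercise boundary values (must pass) and invalid values (must fail)."""
    accepted = [PAN_MIN_DEG, 0, PAN_MAX_DEG]
    rejected = [PAN_MIN_DEG - 0.001, PAN_MAX_DEG + 0.001,
                float("nan"), float("inf"), "90", None, True]
    results = {"passed": 0, "failed": 0}
    for value in accepted:
        try:
            checked_pan_command(value)
            results["passed"] += 1
        except (TypeError, ValueError):
            results["failed"] += 1
    for value in rejected:
        try:
            checked_pan_command(value)
            results["failed"] += 1  # invalid input slipped through
        except (TypeError, ValueError):
            results["passed"] += 1
    return results
```

The suite treats "an invalid value was accepted" as a failure, which is the property a robustness test is meant to expose. The same list of cases can be kept as a regression asset and rerun whenever the component or its interface version changes.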

[Table 1 | A table compares deterministic software with AI components in military autonomy.]

Using AI to strengthen the assurance process itself

AI’s role in autonomous systems extends beyond runtime behavior into the heart of software development and verification. In practice, this starts with proven, production-grade static analysis. These tools go well beyond pattern matching into deep control-flow and data-flow analysis to identify defects that would otherwise remain hidden, including runtime errors, logic flaws, security weaknesses, and violations of safety coding standards such as MISRA and CERT.

These static-analysis engines establish a deterministic, explainable foundation for software assurance and can precisely identify where and why code violates defined rules or introduces risk. Through the use of an MCP [Model Context Protocol] server and AI agents, AI is applied after violations are identified, leveraging the MCP server’s contextual understanding of the project, the codebase, and the applicable standards. This combination enables AI agents to propose or implement fixes that are standards-compliant and consistent with the surrounding design intent, rather than producing generic or unsafe edits.

Because the MCP server maintains an intimate view of the system context – including coding guidelines, architectural constraints, and historical changes – AI-driven remediation becomes both targeted and auditable. Fixes can be routed through configurable workflows, ranging from human-in-the-loop approval to fully automated correction followed by regression testing. Every change is logged, traceable, and supported by objective evidence, thereby preserving the integrity of the assurance argument.

AI further strengthens verification by automatically generating unit tests and test cases that exercise identified code paths. These tests are used to confirm that each line of code is executed as intended and that required code-coverage objectives (e.g., statement, branch, and modified condition/decision coverage, or MC/DC) are met. Coverage analysis then feeds back into the verification process, highlighting gaps that require additional testing to support safety and mission assurance claims.
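The difference between branch coverage and modified condition/decision coverage is easiest to see on a small decision. The sketch below uses a hypothetical three-condition decision, `a and (b or c)`, and checks the unique-cause form of the criterion: each condition counts as covered when two test vectors differ only in that condition and produce different decision outcomes. The helper name `mcdc_covered` is an assumption made for this example.

```python
from itertools import combinations
from typing import Callable, Sequence, Set, Tuple


def decision(a: bool, b: bool, c: bool) -> bool:
    """Hypothetical decision under test."""
    return a and (b or c)


def mcdc_covered(tests: Sequence[Tuple[bool, ...]],
                 fn: Callable[..., bool]) -> Set[int]:
    """Return indices of conditions shown to independently affect `fn`."""
    covered: Set[int] = set()
    for t1, t2 in combinations(tests, 2):
        differing = [i for i in range(len(t1)) if t1[i] != t2[i]]
        # Unique cause: exactly one condition changes and the outcome flips.
        if len(differing) == 1 and fn(*t1) != fn(*t2):
            covered.add(differing[0])
    return covered


# Two tests reach both decision outcomes but isolate no single condition.
branch_only = [(True, True, True), (False, False, False)]

# Four tests (n + 1 for n = 3 conditions) isolate a, b, and c in turn.
mcdc_set = [(True, True, False), (False, True, False),
            (True, False, False), (True, False, True)]
```

Here `mcdc_covered(branch_only, decision)` is empty even though both outcomes of the decision are exercised, whereas `mcdc_covered(mcdc_set, decision)` contains all three condition indices. That gap is the kind of information coverage analysis feeds back into test generation.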

Taken together, these steps align with emerging safety frameworks that treat assurance as an evidence-based argument sustained over time. Rather than asserting safety based on checklist completion or fixed acceptance criteria, these frameworks require credible and traceable evidence that AI-related risks are understood and responsibly managed. The objective is not to prove that AI will never fail, but rather to demonstrate what the system is intended to do, what it is not intended to do, the conditions under which it can safely operate, and how it behaves when those conditions are violated.

This evidence does not live in a single standalone artifact. It is instead integrated into existing life cycle work products (requirements specifications, safety concepts, verification and validation plans, test results, and coverage data) and is maintained through bidirectional traceability. When requirements, code, or data change, the impact on safety evidence is immediately visible, enabling timely reverification and preserving the integrity of the overall assurance argument throughout the system life cycle.
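Bidirectional traceability can be modeled as a directed graph that is walked in either direction. The following sketch is one possible shape for such a structure; the class name `TraceMatrix` and all artifact identifiers (`REQ-12`, `src/guard.c`, and so on) are hypothetical placeholders rather than references to a real project or tool.

```python
from collections import defaultdict
from typing import Dict, Set


class TraceMatrix:
    """Minimal bidirectional trace store between life cycle artifacts."""

    def __init__(self) -> None:
        self._down: Dict[str, Set[str]] = defaultdict(set)  # source -> derived
        self._up: Dict[str, Set[str]] = defaultdict(set)    # derived -> source

    def link(self, upstream: str, downstream: str) -> None:
        self._down[upstream].add(downstream)
        self._up[downstream].add(upstream)

    @staticmethod
    def _closure(start: str, edges: Dict[str, Set[str]]) -> Set[str]:
        seen: Set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            for nxt in edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen

    def impacted_by(self, changed: str) -> Set[str]:
        """Everything downstream of a change that needs reverification."""
        return self._closure(changed, self._down)

    def traces_to(self, artifact: str) -> Set[str]:
        """Everything upstream that justifies an artifact's existence."""
        return self._closure(artifact, self._up)


matrix = TraceMatrix()
matrix.link("REQ-12", "src/guard.c")
matrix.link("src/guard.c", "TEST-40")
matrix.link("TEST-40", "COV-REPORT-7")
matrix.link("REQ-12", "SAFETY-CLAIM-3")
```

In this toy data set, a change to `REQ-12` flags the source file, its test, the coverage report, and the safety claim for reverification, while walking upward from `TEST-40` recovers the code and requirement that the test exists to support.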

Stronger assurance enables effective autonomy

AI brings undeniable advantages to military autonomous systems, but it also introduces uncertainty that cannot be eliminated, only managed. The path forward is not to reduce assurance expectations, but to elevate them. Continuous verification, robust guardrails, system-level thinking, and disciplined use of AI in both operation and development form the foundation of trustworthy autonomy.

Ricardo Camacho, Director of Product Strategy Embedded & Safety Critical Compliance at Parasoft, guides the strategy and growth of Parasoft’s software test automation solutions for the embedded safety- and security-critical market. With more than 30 years of experience in systems and software engineering of real-time systems, Ricardo is involved in promulgating standards like ISO 26262, DO-178C, IEC 62304, IEC 61508, IEC 62443, DO-326A, and more. Readers may reach the author at [email protected].

Parasoft https://www.parasoft.com/