Real-time image compositing: Software displaces hardware for radar displays

July 8, 2008

Historically handled by specialist hardware, the display of real-time radar video with multi-layer graphics is now solved with high-performance COTS software processing and graphics platforms. Fusing multicore computing platforms with high-performance graphics chips provides enhanced performance, lower costs, and easier maintenance through common hardware components.

Military command and control displays combine radar video with complex charts and overlay symbology in a multi-layer presentation. Managing the display's layers so that they update promptly and present the radar realistically can be difficult.

One approach to solving this challenge is to design special-purpose hardware display architectures that support multiple graphics layers. However, developments in COTS processing and Graphics Processor Units (GPUs) have enabled multi-layer displays to be implemented in software without compromising the quality of the display presentation. The new solution allows the compositing of multiple windows of radar video, along with underlay charts and overlay symbology, all updated at different rates and with minimal interaction between the display layers. An example of this is shown in Figure 1, which depicts a combination of underlay, radar, and overlay.

Figure 1

Standardized hardware platforms

A modern visualization solution for real-time data will employ all-digital processing and display. Sensor data may be supplied directly in digital format – commonplace for modern sensors – or may be converted from its analog legacy interface through a processing server. Standard Ethernet networks provide a cost-effective interconnect from sensors or processing servers through to multiple display clients, possibly incorporating video compression where the number of channels is large or network bandwidth is at a premium.

With the real-time video arriving at multiple display clients, it is highly desirable that the client architecture minimize the need for special-purpose hardware such as a radar scan converter. These products have provided an excellent method for radar scan conversion and graphics mixing using innovative techniques, including multi-layer frame stores and video keying. However, if combined graphics and sensor video could be presented using software on standard processing and display hardware, the resulting client displays would be more cost-effective, smaller, and more flexible. Furthermore, as processing and graphics architectures inevitably progress, software solutions can support enhanced and evolving capabilities. In contrast, hardware solutions tend to solve today's problem and offer limited potential for growth or expansion.

The need for specialized hardware in clients has tended to limit a console's ability to reconfigure its role or evolve to meet new requirements. Often the hardware defines the function, and changing the function requires changing the hardware. Reducing the amount of hardware also translates directly into lower maintenance costs and higher system reliability. Where the client is built from industry-standard computing and graphics hardware, the additional cost benefits and ease of upgrade further reduce the lifetime cost of deployment.

Display presentation

The problem of displaying radar sensor video with graphics underlays and overlays is summarized in Figure 1, which shows a logical layering of graphical data relative to the radar: some graphics constitute the underlay and others the overlay. This split into underlay, radar, and overlay matters only in defining how the three elements are combined for presentation.

The underlay element is typically a complex map composed of many logical layers that together form a naval chart. The radar video is mixed with the underlay in a form of cross-mixing, in which each pixel's color depends on both the graphics color and the radar color. In contrast, graphics drawn in the overlay element are displayed in preference to any radar or underlay color at that point. Only where the overlay presents a "transparent" color do the combined radar and underlay show through.

A convenient language and algebra for describing image combination is provided by Porter and Duff in their seminal work on compositing digital images [1]. They describe a set of operators for combining images, including a blending operation they call over. Applying this terminology to the display of radar video, we can define a rule that combines an overlay A with radar R and underlay B as:

Display = A over (R over B)
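
Written out per pixel, and assuming non-premultiplied colors C with per-pixel alpha α and an opaque underlay B (assumptions made for this sketch; Porter and Duff also give a premultiplied form), the over operator and the display rule expand as:

```latex
\begin{aligned}
C_{X\,\mathrm{over}\,Y} &= \alpha_X C_X + (1-\alpha_X)\,C_Y \\
C_{\mathrm{Display}} &= \alpha_A C_A + (1-\alpha_A)\bigl[\alpha_R C_R + (1-\alpha_R)\,C_B\bigr]
\end{aligned}
```

Setting α_R = 0 where there is no radar leaves the bracket equal to C_B, and restricting α_A to 0 or 1 makes the overlay either replace the pixel outright or let the radar and underlay through.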

The over operator uses an alpha component that defines the degree of transparency when two pixels are combined. For the R over B operation, an alpha value is computed for each display pixel to set the relative weighting of radar and underlay. This per-pixel alpha must leave the underlay graphics unaffected where there is no radar, and otherwise produce a proportional blend of the radar and graphics colors.
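
A minimal per-pixel sketch of R over B follows. It assumes, hypothetically, that the radar alpha is simply the 8-bit radar amplitude scaled to the range 0 to 1 and that the radar is drawn in a single color; the article does not say how the alpha is actually derived, and the function names are illustrative only.

```python
def radar_alpha(amplitude: int, max_amplitude: int = 255) -> float:
    """Hypothetical mapping: alpha grows linearly with radar amplitude; 0 means no radar."""
    clamped = max(0, min(amplitude, max_amplitude))
    return clamped / max_amplitude


def over(src, dst, alpha):
    """Porter-Duff 'over' for non-premultiplied RGB onto an opaque destination."""
    return tuple(round(alpha * s + (1.0 - alpha) * d) for s, d in zip(src, dst))


def radar_over_underlay(amplitude, radar_rgb, underlay_rgb):
    """R over B for one pixel: underlay unchanged at zero amplitude, else a proportional blend."""
    return over(radar_rgb, underlay_rgb, radar_alpha(amplitude))


print(radar_over_underlay(0, (0, 255, 0), (10, 20, 30)))      # (10, 20, 30): no radar
print(radar_over_underlay(51, (0, 255, 0), (100, 100, 100)))  # (80, 131, 80): 20 % radar
```

The linear mapping is only the simplest choice that meets both requirements; a lookup table from amplitude to alpha would allow any other weighting curve.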

The final A over operation requires an alpha value for A of 1 where the overlay pixel is nontransparent and 0 where it is transparent. This implements a color-keyed overlay, ensuring that the radar and underlay appear only where an overlay pixel is transparent. Figure 2 illustrates the combination effects using a set of three simple geometric shapes. Combining the three elements is a real-time process: the screen must be refreshed often enough to give a smoothly updating display of graphics and radar video.
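
The complete rule can be sketched in plain Python, pixel by pixel. The magenta color key, the green radar color, and the amplitude-as-alpha mapping are assumptions made for illustration; a real implementation would run this on the GPU rather than in an interpreter loop.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
KEY: RGB = (255, 0, 255)  # assumed "transparent" color key for the overlay


def over(src: RGB, dst: RGB, alpha: float) -> RGB:
    """Porter-Duff 'over' for non-premultiplied RGB onto an opaque destination."""
    return tuple(round(alpha * s + (1.0 - alpha) * d) for s, d in zip(src, dst))


def composite(overlay: List[List[RGB]], radar: List[List[int]],
              underlay: List[List[RGB]], radar_rgb: RGB = (0, 255, 0)) -> List[List[RGB]]:
    """Display = A over (R over B), evaluated pixel by pixel."""
    out = []
    for a_row, r_row, b_row in zip(overlay, radar, underlay):
        row = []
        for a, r, b in zip(a_row, r_row, b_row):
            r_over_b = over(radar_rgb, b, r / 255)  # proportional radar/underlay blend
            alpha_a = 0.0 if a == KEY else 1.0      # color-keyed overlay: alpha is 0 or 1
            row.append(over(a, r_over_b, alpha_a))
        out.append(row)
    return out


overlay = [[KEY, (255, 255, 255), KEY]]  # one white symbol pixel in the middle
radar = [[255, 255, 0]]                  # strong return, strong return, no return
underlay = [[(0, 0, 128)] * 3]           # dark-blue sea from the chart
print(composite(overlay, radar, underlay))
# [[(0, 255, 0), (255, 255, 255), (0, 0, 128)]]
```

The middle pixel shows the overlay winning over strong radar, and the last pixel shows the underlay passing through untouched where there is neither overlay nor radar.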

Figure 2

Finding a software solution

A number of developments in COTS hardware have enabled real-time software compositing to become a reality. First of all, the development of multiprocessor architectures with mainstream multicore processors provides enormous data processing capability. By designing the software to exploit the multiple processing elements, display-related processing can be allocated to one or more cores with minimal impact on other application processing.
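
The article does not say how display processing is divided across cores. One common pattern, shown here only as an assumption, is to split the frame into horizontal bands and give each band to a worker. In CPython the global interpreter lock serializes pure-Python loops like these, so this sketch illustrates the partitioning rather than a real speed-up; native code, vectorized libraries, or separate processes would be needed for that.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_rows(height, workers):
    """Divide rows [0, height) into contiguous bands, one per worker."""
    base, extra = divmod(height, workers)
    bands, start = [], 0
    for i in range(workers):
        stop = start + base + (1 if i < extra else 0)
        bands.append((start, stop))
        start = stop
    return bands


def blend_band(radar, underlay, out, start, stop):
    """R over B on grey levels for rows [start, stop). The radar is drawn at full
    intensity 255 and its amplitude is used as alpha (both assumptions)."""
    for y in range(start, stop):
        out[y] = [round((r / 255) * 255 + (1 - r / 255) * b)
                  for r, b in zip(radar[y], underlay[y])]


def composite_parallel(radar, underlay, workers=None):
    workers = workers or os.cpu_count() or 1
    out = [None] * len(radar)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Bands are disjoint, so each worker writes only its own rows and no lock is needed.
        jobs = [pool.submit(blend_band, radar, underlay, out, start, stop)
                for start, stop in split_rows(len(radar), workers) if start < stop]
        for job in jobs:
            job.result()  # re-raise any exception from a worker
    return out


print(composite_parallel([[0, 255], [51, 0]], [[100, 100], [100, 7]], workers=2))
```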

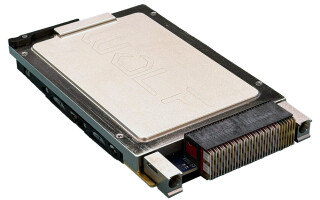

The second development is in modern graphics card performance and architecture. Driven by the seemingly unlimited demands of gaming and simulation, the latest generation of graphics cards provides its own processing capability that serves to offload the main processor and accelerate graphics operations so they run entirely within the GPU's processing and memory subsystem. Significantly, the combination of a multicore processing platform and high-performance graphics subsystem is now an established computing platform available in ruggedized military form. One example is GE Fanuc Intelligent Platforms' MAGIC1 rugged display (see Figure 3).

Figure 3

Practical considerations

A practical implementation of a software-based, real-time image compositor has to do more than provide compositing functions for display elements. It has to work with the constraints of application software, third-party display toolkits, and graphics drivers. In the example shown previously, the underlay map comprises many display layers that may be constructed from a combination of raster and vector components. Such displays are commonly managed by third-party display toolkits, which handle complex display presentation. These toolkits are layered on top of the target computer's native window system, for example, Microsoft Windows or the X Window System for Linux and Solaris.

Application software that uses a toolkit for chart displays needs a radar scan converter and an associated display processor to present radar video in the layered manner described previously. However, the interaction between the radar presentation and the graphical display toolkit should be minimal. The goal is for the display's graphical component to be managed independently of the radar layer, with the low-level graphics libraries providing the necessary display combinations. Some situations may demand that the radar processing and display functions be handled in a separate processing context (in UNIX terms, a process), providing protection against software failures.
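
The separation described above can be sketched as a store of independent layer buffers: the chart toolkit and the radar path each publish only to their own layer, and the compositor copies a snapshot of each layer before combining them. This thread-based version uses hypothetical names and is not the SPx API; a process-level split, as suggested for fault isolation, would put the buffers in shared memory or behind a socket instead.

```python
import threading
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Layer:
    pixels: List[int]
    version: int = 0
    lock: threading.Lock = field(default_factory=threading.Lock)


class LayerStore:
    """Hypothetical layer store: each producer owns one named layer and never touches the others."""

    def __init__(self, size: int):
        self.layers: Dict[str, Layer] = {
            name: Layer([0] * size) for name in ("underlay", "radar", "overlay")
        }

    def publish(self, name: str, pixels: List[int]) -> None:
        """Replace one layer's contents; only that layer's lock is taken."""
        layer = self.layers[name]
        with layer.lock:
            layer.pixels = list(pixels)
            layer.version += 1

    def snapshot(self) -> Dict[str, Tuple[int, List[int]]]:
        """Copy each layer under its own lock so the compositor can work without holding any."""
        snap = {}
        for name, layer in self.layers.items():
            with layer.lock:
                snap[name] = (layer.version, list(layer.pixels))
        return snap


store = LayerStore(size=4)


def radar_producer():
    for sweep in range(50):  # radar layer: frequent updates
        store.publish("radar", [sweep % 256] * 4)


def chart_producer():
    for _ in range(3):  # chart toolkit: occasional redraws
        store.publish("underlay", [10, 20, 30, 40])


threads = [threading.Thread(target=radar_producer), threading.Thread(target=chart_producer)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print({name: version for name, (version, _) in store.snapshot().items()})
# {'underlay': 3, 'radar': 50, 'overlay': 0}
```

Because neither producer ever waits on the other's lock, a slow chart redraw cannot stall the radar sweep, which is the property the layered design is after.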

Exploiting a software framework

Cambridge Pixel's SPx software framework provides a modern implementation of radar processing, distribution, and display that fully exploits the capabilities of modern processing and display hardware to offer multilayer compositing of real-time data (see the case study at the end of this article).

Sidebar 1

The software can render complex, multi-layer graphics applications under both Microsoft Windows and the X Window System (X11), supporting true multi-layer displays. The radar layer is updated at regular intervals to provide smooth sweep updates and realistic fading effects. The underlay graphics are mixed with the radar video, and the result is overlaid with another graphics layer containing symbology and track data. The software cleanly separates radar scan conversion from display processing, optionally allowing these functions to run on different machines.
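
The article mentions realistic fading but not how it is achieved. One simple way to approximate phosphor-style afterglow, assumed here purely for illustration, is to multiply the whole radar layer by a decay factor at each update and to write new returns with a peak-hold rule.

```python
def per_update_decay(target_fraction, update_interval_s, scan_time_s):
    """Decay factor applied at every update so that an un-refreshed return falls to
    target_fraction of its brightness after one full antenna scan."""
    updates_per_scan = scan_time_s / update_interval_s
    return target_fraction ** (1.0 / updates_per_scan)


def fade(layer, decay):
    """Scale every stored radar intensity in place to emulate afterglow."""
    for i, value in enumerate(layer):
        layer[i] = int(value * decay)


def paint_wedge(layer, indices, amplitudes):
    """Write new video with peak hold so a fresh return is never dimmer than faded data."""
    for i, amplitude in zip(indices, amplitudes):
        layer[i] = max(layer[i], amplitude)


decay = per_update_decay(0.1, 0.020, 4.0)  # fade to 10 % over a 4 s scan at 20 ms updates
layer = [200, 100, 0]
fade(layer, 0.5)
paint_wedge(layer, [1, 2], [30, 90])
print(round(decay, 4), layer)  # 0.9886 [100, 50, 90]
```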

An example of the performance achieved is a complex multi-layer underlay map (an S-57 naval chart with 62 thematic layers, 1,400 point features, 4,000 line features, and 1,200 polygons) displayed together with real-time radar (500 Hz PRF, 4 s scan time). The radar display is updated at regular 20 ms intervals to give a highly realistic sweep in 256 levels of radar color. Finally, a range ring and target symbology are drawn over the map and radar as the overlay layer. This complete scenario runs on a rugged desktop computer with a 1,600 x 1,200 display at 32 bits per pixel, a Core 2 Duo platform (2.13 GHz processor), and an NVIDIA 7900 graphics card. The typical CPU/GPU load is less than 10 percent, leaving ample resources for the application software.
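
The figures quoted above imply a modest per-update workload. The arithmetic below is derived only from those figures; the full-frame bandwidth line assumes, pessimistically, that one whole 32-bit frame is rewritten on every update, which the article does not claim SPx does.

```python
def display_budget(prf_hz, scan_time_s, update_interval_s, width, height, bytes_per_pixel):
    """Per-update workload implied by the radar and display parameters."""
    updates_per_second = 1.0 / update_interval_s
    frame_bytes = width * height * bytes_per_pixel
    return {
        "spokes_per_scan": prf_hz * scan_time_s,
        "spokes_per_update": prf_hz * update_interval_s,
        "degrees_per_update": 360.0 * update_interval_s / scan_time_s,
        "updates_per_second": updates_per_second,
        "frame_bytes": frame_bytes,
        # Upper bound: one complete frame copied per update.
        "full_frame_bytes_per_second": frame_bytes * updates_per_second,
    }


budget = display_budget(500.0, 4.0, 0.020, 1600, 1200, 4)
for name, value in budget.items():
    print(f"{name}: {value:,.1f}")
```

With the quoted values this gives 2,000 spokes per scan, 10 spokes and 1.8 degrees of sweep per 20 ms update, 50 updates per second, and a 7,680,000-byte frame, or 384,000,000 bytes per second if every frame were rewritten in full. Only the newly swept wedge actually changes each update, so incremental updates touch far less data than that bound.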

SPx software provides flexibility in the display architecture, allowing scan conversion of the radar video (the conversion from polar coordinates to screen coordinates) to be handled separately from display processing, including the option to split the function across a network. This allows centralized scan conversion of the radar video and distribution of incremental wedges of new data to the display consoles, where the video is composited with the graphics layers. The software is easily reconfigured to move scan conversion into the display console and distribute the polar video across the network if that configuration is preferred.
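
A minimal sketch of what scan conversion involves follows, assuming a north-up display, azimuths in degrees clockwise from north, and a simple forward mapping with nearest-pixel rounding. Forward mapping leaves gaps between spokes at long range, so practical converters typically fill those gaps or map in reverse from screen pixels to polar samples; this is not SPx's algorithm, which the article does not describe. The returned dictionary of touched pixels stands in for an incremental wedge.

```python
import math
from typing import Dict, List, Tuple


def scan_convert_wedge(spokes: List[Tuple[float, List[int]]], size: int,
                       max_range_samples: int) -> Dict[Tuple[int, int], int]:
    """Forward-map polar spokes onto a size x size, north-up PPI image centred on the radar.

    Each spoke is (azimuth in degrees clockwise from north, list of range-sample amplitudes).
    Only the pixels touched by these spokes are returned, i.e. an incremental wedge.
    """
    centre = (size - 1) / 2.0
    scale = centre / max_range_samples  # pixels per range sample
    wedge: Dict[Tuple[int, int], int] = {}
    for azimuth_deg, samples in spokes:
        theta = math.radians(azimuth_deg)
        sin_t, cos_t = math.sin(theta), math.cos(theta)
        for r, amplitude in enumerate(samples):
            if r > max_range_samples:  # beyond the displayed range
                break
            x = round(centre + r * scale * sin_t)
            y = round(centre - r * scale * cos_t)  # screen y increases downwards
            # Several samples can land on one pixel near the centre: keep the strongest.
            wedge[(x, y)] = max(wedge.get((x, y), 0), amplitude)
    return wedge


spokes = [(0.0, [0, 0, 0, 0, 0, 200]), (90.0, [0, 0, 0, 0, 0, 150])]
wedge = scan_convert_wedge(spokes, size=11, max_range_samples=5)
print(wedge[(5, 0)], wedge[(10, 5)])  # 200 150: north return at top, east return at right
```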

Industry standard provides best solution

Eliminating special-purpose hardware from the processing and display functions, and using only industry-standard hardware and software components, gives a cost-effective initial deployment and an open-standards path for maintenance, repair, and upgrade. In a network-based implementation, radar video is distributed from a central acquisition point through a display processor to a number of display consoles, and only industry-standard computing and display components are needed to present the real-time, multi-layer display.

David Johnson is technical director at Cambridge Pixel. He holds a BSc in electronic engineering and a PhD in sensor technology from the University of Hull in the UK. He has worked extensively in image processing, radar display systems, and graphics applications at GEC, Primagraphics, and Curtiss-Wright Controls Embedded Computing.

Cambridge Pixel Ltd.

St John's Innovation Centre

+44 (0)1763-260184

www.cambridgepixel.com

References:

1. T. Porter and T. Duff, "Compositing Digital Images," Computer Graphics, Vol. 18, No. 3, 1984, pp. 253-259.

Case study: Naval distribution for Frontier Electronic Systems

Radar video acquired from a number of sensors onboard a ship can be distributed using a product such as Frontier Electronic Systems (FES) LAN Radar Data Distribution System (LRADDS). Accepting a number of naval formats for radar input, LRADDS provides full bandwidth video on a standard Ethernet network for distribution and processing (see figure).

Cambridge Pixel’s SPx radar processor, which uses only industry-standard computing platforms and display components, can accept LRADDS data and provide clutter processing and display enhancement before scan converting the data to a PPI display format. Scan conversion can either occur on the display client or, to distribute the processing load, run on a dedicated radar video server. The server’s output is the continually updating radar image, which is distributed to the display consoles for software compositing and display. The console provides a standard X server for presentation of the underlay and overlay graphic components, with a client application managing the graphics independently of the radar video.
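
The article does not describe the LRADDS or SPx wire formats. Purely as a hypothetical illustration of distributing a continually updating radar image, a server could send each changed rectangle as a small header (position and size as unsigned 16-bit values in network byte order) followed by 8-bit pixels, which a console copies into its radar layer before compositing.

```python
import struct

HEADER = struct.Struct("!HHHH")  # hypothetical header: x, y, width, height


def pack_wedge(x, y, width, height, pixels: bytes) -> bytes:
    """Serialize one updated rectangle of the radar image."""
    if len(pixels) != width * height:
        raise ValueError("pixel count does not match wedge size")
    return HEADER.pack(x, y, width, height) + pixels


def unpack_wedge(message: bytes):
    """Parse a message produced by pack_wedge."""
    x, y, width, height = HEADER.unpack_from(message, 0)
    pixels = message[HEADER.size:HEADER.size + width * height]
    if len(pixels) != width * height:
        raise ValueError("truncated wedge message")
    return x, y, width, height, pixels


def apply_wedge(frame: bytearray, frame_width: int, message: bytes) -> None:
    """Copy the rectangle row by row into the console's radar layer."""
    x, y, w, h, pixels = unpack_wedge(message)
    if x + w > frame_width or (y + h) * frame_width > len(frame):
        raise ValueError("wedge lies outside the frame")
    for row in range(h):
        start = (y + row) * frame_width + x
        frame[start:start + w] = pixels[row * w:(row + 1) * w]


frame = bytearray(4 * 4)  # 4 x 4 radar layer on the console
message = pack_wedge(1, 1, 2, 2, bytes([1, 2, 3, 4]))
apply_wedge(frame, 4, message)
print(list(frame))  # [0, 0, 0, 0, 0, 1, 2, 0, 0, 3, 4, 0, 0, 0, 0, 0]
```

Sending only changed rectangles keeps network traffic proportional to the newly swept area rather than to the full screen.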