Zero trust for military embedded systems

February 11, 2022

A zero-trust security posture assumes every user and device is untrusted, even if it is located within the protected perimeter of the local network. The concept of perimeterless security has been around for more than a decade; one example is the “black core” in the architectural vision of the U.S. Department of Defense (DoD) Global Information Grid. Integrators of embedded systems that require the highest levels of security, for applications such as electronic warfare (EW) warning systems, can layer higher-level security – such as advanced analytics – atop the embedded computer’s real-time operating system (RTOS) to complete the zero-trust architecture.

In the commercial world, Google was one of the first companies to implement perimeterless security, with its BeyondCorp security model, starting in 2009. Until recently, however, the military was slow to adopt and implement zero-trust architectures.

All that changed in early 2021. In February, the Defense Information Systems Agency (DISA) published the Department of Defense (DoD) Zero Trust Reference Architecture¹. Two months later, DoD Chief Information Security Officer David McKeown described plans to create a zero-trust portfolio management office that would provide critical centralization and orchestration for the department’s move toward the advanced cybersecurity architecture². Then, in May 2021, Executive Order 14028 on improving the nation’s cybersecurity³ directed each federal agency, including each military department, to develop a plan to implement zero-trust architecture.

The DoD program often cited as designed for zero trust from the beginning is the Air Force’s Cloud One program. Although Cloud One is a cloud hosting service, zero trust can also apply to military embedded systems. From one viewpoint, an embedded system is an appliance on a larger network, so hardening the embedded system increases the overall security of that network. From another viewpoint, military embedded systems with high functionality often have an internal network connecting various subsystems and can therefore implement a broad set of zero-trust concepts internally.

Zero-trust motivation

The motivation for implementing a zero-trust architecture stems from the increase in network breaches at both public and private enterprises. Traditional cybersecurity architectures attempt to establish and defend a network perimeter around a trusted computing environment using multiple layers of loosely connected security technologies. Contemporary threat actors, ranging from cybercriminals to state-funded hackers, have demonstrated the ability to breach those perimeter defenses. Once inside, they are free to move laterally across the network and gain largely unfettered access to other systems and to the data and algorithms those systems contain.

Beyond being susceptible to external threat actors, perimeter defenses do not protect well against internal threats (Figure 1). Malicious insiders can carry out fraud, theft of data and intellectual property (IP), and sabotage, which can include modifying or disabling functions, installing malware, and creating back doors.

[Figure 1 | A perimeter defense does not protect well against stolen credentials or insider threats and is also vulnerable to sophisticated hackers.]

A zero-trust approach shifts the emphasis from the perimeter of a network to the discrete applications and services within it, building fine-grained access controls around each of those resources. Those access controls match the identity of users and devices to the authorizations associated with them to ensure that access is granted appropriately and securely⁴.

Security environment in military embedded systems

Most modern embedded systems are connected to a network, which makes them vulnerable to attack. This increasingly applies to military embedded systems, which may connect to a cloud for data analysis or download new waveforms during a mission. Beyond network-based attacks, embedded systems also face attacks during maintenance and insider attacks, including those introduced through the supply chain. Yet many embedded systems have only loose security postures, primarily because of the perceived cost of implementing stricter security. When security is implemented, it is almost always perimeter-based security at the edge of the embedded system. That can be as simple as a user ID/password combination that grants broad admin-level privileges, or something slightly more sophisticated, such as a firewall. Embedded systems can benefit from a zero-trust architecture, but they differ from enterprise environments in two significant ways that affect the security solution.

The first big difference is that embedded systems have a stable set of subjects (applications), devices (embedded processors), and communication paths. Adding new applications or devices is rare, and even upgrading applications or replacing failed devices happens infrequently. As a result, the system integrator can lock down the system configuration: not just the hardware and the operating system (OS), but also the middleware and applications. Both the execution schedule of trusted applications and the approved communication paths can be defined statically in a configuration file that is used at boot time. Dynamic integrity testing is still advisable to detect whether any software component has been altered.
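The static, boot-time configuration and dynamic integrity testing described above can be illustrated with a short Python sketch. Everything in it is hypothetical: the `BootConfig` structure, its field names, and the use of SHA-256 digests are assumptions for illustration, not the configuration format of any particular RTOS. A real system would run such checks in trusted firmware or in the kernel, with the expected digests themselves protected against modification.

```python
import hashlib
import hmac
from dataclasses import dataclass
from types import MappingProxyType
from typing import FrozenSet, Mapping, Tuple


@dataclass(frozen=True)
class BootConfig:
    """Hypothetical static configuration fixed by the integrator before deployment."""
    schedule: Tuple[Tuple[str, int], ...]   # (partition, time slice in ms), in execution order
    channels: FrozenSet[Tuple[str, str]]    # approved (source, destination) communication paths
    digests: Mapping[str, str]              # component name -> expected SHA-256 hex digest


def sha256_hex(image: bytes) -> str:
    return hashlib.sha256(image).hexdigest()


def altered_components(config: BootConfig, images: Mapping[str, bytes]) -> list:
    """Return the components whose current image is missing or no longer matches
    its expected digest. Intended to run at boot and then periodically."""
    altered = []
    for name, expected in config.digests.items():
        image = images.get(name)
        # compare_digest performs a constant-time comparison of the two digests
        if image is None or not hmac.compare_digest(sha256_hex(image), expected):
            altered.append(name)
    return altered


if __name__ == "__main__":
    images = {"nav": b"nav-v1", "radio": b"radio-v3"}
    config = BootConfig(
        schedule=(("nav", 10), ("radio", 5)),
        channels=frozenset({("nav", "radio")}),
        digests=MappingProxyType({n: sha256_hex(i) for n, i in images.items()}),
    )
    print(altered_components(config, images))  # []
    images["radio"] = b"radio-v3-tampered"      # simulate an altered component
    print(altered_components(config, images))  # ['radio']
```

Freezing the dataclass and wrapping the digest table in a read-only mapping mirrors the idea that the configuration is fixed once the system boots.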

The second significant difference is that many embedded systems operate autonomously or with a minimal number of users and roles. This has two broad implications for secure operation. First, minimal administrative functionality is needed to support the system. Second, additional assurance measures are inherently required to ensure highly robust autonomous execution of the security management functions⁵.

These differences in environment and use cases make it easier to tailor the security solution for an embedded system than for an enterprise environment.

Zero-trust principles

A zero-trust architecture moves away from the default policy of “trust but verify” for entities inside a perimeter defense to a policy of “never trust, always verify” for all entities. Every user, device, application, and data flow is verified, whether inside or outside a traditional network boundary. The other core concept of zero trust is that the principle of least privilege is applied to every access decision. Common to many security approaches, this principle calls for a subject to be given only the minimum access level required to perform a given task.

The National Security Agency (NSA) defines three guiding principles for zero trust⁶, and the DoD Zero Trust Reference Architecture¹ adds another for monitoring and analytics:

1. Never trust – always verify: Treat every user, device, application, and data flow as untrusted and potentially compromised, whether inside or outside a traditional network boundary. Authenticate and authorize each one only to the least privilege required to complete the task.

2. Assume breach: Consciously operate and defend resources with the assumption that an adversary already has breached the perimeter and is present in the network. Deny by default, and scrutinize every request and requestor.

3. Verify each action explicitly: Use multiple attributes (dynamic and static) to derive confidence levels for contextual access decisions to resources.

4. Apply unified analytics: Apply unified analytics for Data, Applications, Assets, and Services (DAAS) to include behavioristics, and log each transaction.
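A minimal Python sketch of how these principles might combine in a single access decision follows. It assumes a hypothetical static policy table that grants each (subject, resource) pair only the actions it needs, denies anything not explicitly listed, checks several attributes of every request, and records every decision, allowed or denied, in an audit log. For simplicity, the confidence level in principle 3 is reduced to pass/fail checks; the subject names, attributes, and log format are illustrative assumptions, not part of the NSA or DoD guidance.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, Mapping, Tuple

# Hypothetical least-privilege policy: (subject, resource) -> the only actions allowed.
POLICY: Mapping[Tuple[str, str], FrozenSet[str]] = {
    ("ew_display", "threat_db"): frozenset({"read"}),
    ("ew_processor", "threat_db"): frozenset({"read", "write"}),
}


@dataclass(frozen=True)
class Request:
    subject: str
    resource: str
    action: str
    authenticated: bool    # identity verified for this request (never assumed)
    device_attested: bool  # device passed an integrity check


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, req: Request, allowed: bool, reason: str) -> None:
        timestamp = datetime.now(timezone.utc).isoformat()
        self.entries.append((timestamp, req.subject, req.resource, req.action, allowed, reason))


def decide(req: Request, log: AuditLog) -> bool:
    """Deny by default: access is granted only if every check passes."""
    if not req.authenticated:
        allowed, reason = False, "identity not verified"
    elif not req.device_attested:
        allowed, reason = False, "device not attested"
    elif req.action not in POLICY.get((req.subject, req.resource), frozenset()):
        allowed, reason = False, "not explicitly permitted"
    else:
        allowed, reason = True, "explicitly permitted"
    log.record(req, allowed, reason)  # every transaction is logged, including denials
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    print(decide(Request("ew_display", "threat_db", "read", True, True), log))   # True
    print(decide(Request("ew_display", "threat_db", "write", True, True), log))  # False
    print(len(log.entries))                                                      # 2
```

Note that the display subject is denied write access even though it is authenticated and attested: verification alone does not grant more than the least privilege recorded in the policy.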

Separation kernels for embedded security

A well-accepted foundation for military embedded security is partitioning: running applications in separate, isolated partitions. Apart from microcontrollers and digital signal processors, most CPU chips used in embedded systems include a memory management unit (MMU) that can provide hardware-enforced partitioning of memory among applications. Although that MMU can be controlled by a general-purpose OS or an industrial RTOS, the most secure systems use a separation kernel. A separation kernel is a specific type of microkernel in which the only functions running in privileged kernel mode are the most critical security functions.

A separation kernel is a minimized OS kernel whose only function is to enforce four basic security policies: data isolation, fault isolation, control of information flow, and resource sanitization⁷. All other OS services are moved to user space so that the code size and attack surface of kernel-mode code are kept to an absolute minimum (Figure 2). One way to think of this is as applying the principle of least privilege to the OS itself. Software components with the largest attack surfaces and the most vulnerabilities, such as the networking stack, the file system, and even virtualization, can run adequately in user mode, so the security policy should not allow them to execute in privileged kernel mode.

[Figure 2 | With a separation kernel, the OS services, middleware, and applications run in isolated partitions and only explicitly permitted, preconfigured communications occur between them.]
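To make three of those four policies concrete, the following Python model assigns each memory region to exactly one partition. It is purely illustrative: a real separation kernel enforces these rules with the MMU from privileged mode, not in application code, and `ToySeparationKernel` and its methods are hypothetical names that do not correspond to any real kernel API. Data isolation rejects access from any other partition, fault isolation halts only the faulting partition, and resource sanitization zeroes a region before it is reassigned. Control of information flow is omitted; it amounts to the allow-list check discussed under microsegmentation.

```python
from typing import Dict, Tuple


class IsolationError(Exception):
    """Raised when a partition touches memory it does not own."""


class ToySeparationKernel:
    """Illustrative model only; not a real kernel API."""

    def __init__(self, regions: Dict[str, Tuple[str, int]]):
        # region name -> [owning partition, region contents]
        self._regions = {name: [owner, bytearray(size)] for name, (owner, size) in regions.items()}
        self._halted = set()

    def _check(self, partition: str, region: str) -> bytearray:
        if partition in self._halted:
            raise IsolationError(f"{partition} is halted")
        owner, buf = self._regions[region]
        if owner != partition:  # data isolation
            raise IsolationError(f"{partition} may not access {region}")
        return buf

    def write(self, partition: str, region: str, offset: int, data: bytes) -> None:
        buf = self._check(partition, region)
        if offset < 0 or offset + len(data) > len(buf):
            raise IsolationError("write outside region bounds")
        buf[offset:offset + len(data)] = data

    def read(self, partition: str, region: str) -> bytes:
        return bytes(self._check(partition, region))

    def fault(self, partition: str) -> None:
        """Fault isolation: only the faulting partition stops running."""
        self._halted.add(partition)

    def reassign(self, region: str, new_owner: str) -> None:
        """Resource sanitization: clear the region before another partition gets it.
        Called by the kernel or its configuration, never by a partition."""
        entry = self._regions[region]
        entry[1][:] = bytes(len(entry[1]))
        entry[0] = new_owner


if __name__ == "__main__":
    k = ToySeparationKernel({"r1": ("A", 8), "r2": ("B", 8)})
    k.write("A", "r1", 0, b"secret")
    try:
        k.read("B", "r1")
    except IsolationError as err:
        print(err)                # B may not access r1
    k.reassign("r1", "B")
    print(k.read("B", "r1"))      # b'\x00\x00\x00\x00\x00\x00\x00\x00'
    k.fault("A")
    print(k.read("B", "r2")[:1])  # b'\x00' (B keeps running)
```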

A less secure alternative to a separation kernel is a hypervisor, which adds virtualization to the kernel code to increase virtualization performance at the expense of a larger attack surface. That larger code base is much harder to secure, let alone to prove secure.

Mapping separation kernel attributes to zero-trust principles

The fundamental security policies enforced by a separation kernel – data isolation, fault isolation, control of information flow, and resource sanitization – map well to zero-trust principles. Layered security extensions in the OS services provide additional capabilities (Table 1).

[Table 1 | The fundamental security policies enforced by a separation kernel map well to zero-trust principles.]

Zero-trust architectures for embedded systems

The National Institute of Standards and Technology (NIST) Special Publication 800-207, “Zero Trust Architecture”⁸, describes a general architecture with a policy engine, a policy administrator, and a policy enforcement point (PEP) at its core (Figure 3). The policy engine makes the decision to grant, deny, or revoke access to a resource. The policy administrator executes that decision by establishing or shutting down the communication path and by generating any session-specific authentication material, such as an authentication token or credential, that a client uses to access an enterprise resource. The PEP sits in the data path of resource access and is responsible for enabling, monitoring, and terminating connections between a subject and an enterprise resource. In some implementations, the PEP is divided into a client-side agent and a resource-side gateway.

[Figure 3 | Core zero-trust logical components (NIST SP 800-207, Figure 2).]
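The division of labor among these three logical components can be sketched in Python as a simplified, single-process illustration. SP 800-207 defines components, not an API, so the class names below merely mirror the NIST terms; the policy engine's rules (a hypothetical allow set of subject and resource pairs) and the session credential (a random token from Python's `secrets` module) are assumptions for illustration.

```python
import secrets
from typing import Dict, Iterable, Optional, Tuple


class PolicyEngine:
    """Decides whether access is granted; withdrawing a rule later means 'revoke'."""

    def __init__(self, allowed: Iterable[Tuple[str, str]]):
        self._allowed = set(allowed)

    def decide(self, subject: str, resource: str) -> bool:
        return (subject, resource) in self._allowed

    def withdraw(self, subject: str, resource: str) -> None:
        self._allowed.discard((subject, resource))


class PolicyEnforcementPoint:
    """Sits in the data path and forwards only traffic tied to an open session."""

    def __init__(self):
        self._sessions: Dict[str, Tuple[str, str]] = {}  # token -> (subject, resource)

    def open(self, token: str, subject: str, resource: str) -> None:
        self._sessions[token] = (subject, resource)

    def close(self, token: str) -> None:
        self._sessions.pop(token, None)

    def sessions(self) -> Dict[str, Tuple[str, str]]:
        return dict(self._sessions)  # a copy, so callers can close sessions while iterating

    def forward(self, token: str, subject: str, resource: str) -> bool:
        return self._sessions.get(token) == (subject, resource)


class PolicyAdministrator:
    """Executes the engine's decisions: issues session tokens and opens or closes paths."""

    def __init__(self, engine: PolicyEngine, pep: PolicyEnforcementPoint):
        self._engine = engine
        self._pep = pep

    def request_access(self, subject: str, resource: str) -> Optional[str]:
        if not self._engine.decide(subject, resource):
            return None  # denied: no communication path is established
        token = secrets.token_hex(16)  # session-specific credential
        self._pep.open(token, subject, resource)
        return token

    def reevaluate(self) -> None:
        """Shut down any session the engine no longer approves."""
        for token, (subject, resource) in self._pep.sessions().items():
            if not self._engine.decide(subject, resource):
                self._pep.close(token)


if __name__ == "__main__":
    engine = PolicyEngine({("tracker", "radar_feed")})
    pep = PolicyEnforcementPoint()
    admin = PolicyAdministrator(engine, pep)
    token = admin.request_access("tracker", "radar_feed")
    print(pep.forward(token, "tracker", "radar_feed"))   # True
    print(admin.request_access("logger", "radar_feed"))  # None
    engine.withdraw("tracker", "radar_feed")             # engine decides to revoke
    admin.reevaluate()
    print(pep.forward(token, "tracker", "radar_feed"))   # False
```

Keeping the decision (engine), its execution (administrator), and its enforcement (PEP) in separate objects reflects the NIST separation: the PEP never evaluates policy itself, it only honors sessions the administrator has opened.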

In practice, zero-trust architectures often place individual resources or groups of resources on their own network segment, protected by a security gateway that acts as the PEP. Segmenting network traffic reduces the attack surface and makes it harder for an adversary to move laterally through the network.

Microsegmentation for data security

Microsegmentation is the deployment of a virtual firewall at every virtual network interface where network traffic enters and leaves each virtual machine. These per-VM firewalls isolate every network resource and endpoint from every other, even when they reside on the same subnet⁹.

Microsegmentation is an essential part of moving from a network-centric approach to data-centric security. It works at a more granular level to protect DAAS by creating policies that limit network and application flows between workloads to those that are explicitly permitted. It does this by segmenting users, applications, workloads, and devices based on logical, not physical, attributes¹. Implementing microsegmentation early in the zero-trust process follows the NSA’s advice⁶ to focus first on protecting critical DAAS before securing all paths to access them. The smaller virtual network segments also present a reduced attack surface.

In an embedded system, a separation kernel directly supports microsegmentation. Microsegmentation uses lists of explicitly permitted network and application flows between workloads, sometimes called “access lists” or “allow lists.” If a connection is not explicitly listed, it is denied by default. A separation kernel implements such an access list in a static configuration file that is loaded when the kernel boots. No application or other software running in user space can be granted privileges that would let it change the configuration file or circumvent the access list.
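The deny-by-default allow list can be illustrated with a short Python sketch. The one-flow-per-line `source -> destination` text format and the partition names are hypothetical; real separation kernels use their own vendor-specific configuration formats. Here, immutability is only approximated with a `frozenset` and the absence of any update method; in a separation kernel, it is the kernel's privileged mode that keeps user-space software from changing the list.

```python
from typing import FrozenSet, Tuple

# Hypothetical boot-time configuration: one permitted flow per line.
CONFIG_TEXT = """
# source      -> destination
sensor_io     -> signal_proc
signal_proc   -> threat_eval
threat_eval   -> display
"""


def load_allow_list(text: str) -> FrozenSet[Tuple[str, str]]:
    """Parse the configuration once, at boot, into an immutable set of flows."""
    flows = set()
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        src, sep, dst = line.partition("->")
        src, dst = src.strip(), dst.strip()
        if not sep or not src or not dst:
            raise ValueError(f"malformed flow entry: {raw!r}")
        flows.add((src, dst))
    return frozenset(flows)


class FlowMonitor:
    """Checks every message against the allow list; anything unlisted is denied."""

    def __init__(self, allow_list: FrozenSet[Tuple[str, str]]):
        self._allow = allow_list  # no method exists to modify it after construction

    def permits(self, src: str, dst: str) -> bool:
        return (src, dst) in self._allow


if __name__ == "__main__":
    monitor = FlowMonitor(load_allow_list(CONFIG_TEXT))
    print(monitor.permits("sensor_io", "signal_proc"))  # True
    print(monitor.permits("signal_proc", "sensor_io"))  # False: flows are one-way
    print(monitor.permits("display", "sensor_io"))      # False: never listed
```

Rejecting a malformed entry at load time, rather than skipping it, keeps a typo in the configuration from silently changing the permitted flows.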

Device application sandboxing

NIST SP 800-207 describes different variations of a zero-trust architecture, including a VM-based variation called “device application sandboxing” (Figure 4). In that scenario, vetted applications run compartmentalized on assets, where the compartments could be virtual machines, containers, or some other implementation. The goal is to protect each application from a possibly compromised host or other applications running on the asset. The applications can communicate with the PEP to request access to resources, but the PEP will refuse requests from other applications on the asset.

[Figure 4 | Device application sandboxing (NIST SP 800-207, Figure 6).]

The fundamental property of a separation kernel is to isolate applications in hardware-enforced partitions. Those partitions correspond to the “sandboxes” or “compartments” and fulfill the goal of protecting each application, or instance of an application, from a possibly compromised host or from other applications running on the asset. A separation kernel offers even more security by providing a secure, trusted base on which each application can rely. The ideal separation kernel is one that is certified to host a mix of trusted and untrusted applications at mixed security assurance levels.
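The device application sandboxing pattern, in which the PEP serves only the vetted application on an asset, can be sketched in Python as follows. The sketch assumes that each vetted compartment receives a random credential when it is provisioned and that the isolation boundary (a partition, VM, or container) keeps that credential out of reach of other applications on the same asset. Python cannot enforce that boundary, so only the PEP's decision is modeled; `AgentPEP`, `Compartment`, and `provision` are hypothetical names.

```python
import hmac
import secrets
from typing import Dict, Optional


class Compartment:
    """A sandboxed application instance (partition, VM, or container)."""

    def __init__(self, app_name: str, credential: Optional[bytes] = None):
        self.app_name = app_name
        # Only compartments provisioned by the PEP hold a credential. In a real
        # system the isolation boundary, not Python, keeps this value private.
        self._credential = credential

    def request(self, pep: "AgentPEP", resource: str) -> str:
        return pep.handle(self.app_name, self._credential, resource)


class AgentPEP:
    """Client-side PEP agent that serves only vetted, provisioned applications."""

    def __init__(self):
        self._credentials: Dict[str, bytes] = {}

    def provision(self, app_name: str) -> Compartment:
        credential = secrets.token_bytes(32)
        self._credentials[app_name] = credential
        return Compartment(app_name, credential)

    def handle(self, app_name: str, credential: Optional[bytes], resource: str) -> str:
        expected = self._credentials.get(app_name)
        if expected is None or credential is None or not hmac.compare_digest(expected, credential):
            return "refused"
        return f"forwarded request for {resource} from {app_name}"


if __name__ == "__main__":
    pep = AgentPEP()
    vetted = pep.provision("mission_app")
    print(vetted.request(pep, "waveform_store"))
    # forwarded request for waveform_store from mission_app
    print(Compartment("mission_app").request(pep, "waveform_store"))  # refused (impersonator)
    print(Compartment("game").request(pep, "waveform_store"))         # refused (not vetted)
```

The PEP trusts the credential issued at provisioning rather than the application name a caller claims, which is why an impersonating compartment using the vetted name is still refused.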

Separation kernel, layered OS for security

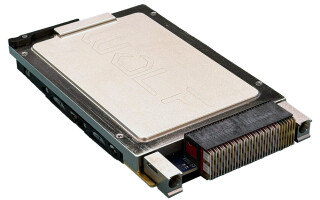

The INTEGRITY-178 tuMP high-assurance RTOS from Green Hills Software combines a secure separation kernel with layered OS services and can be a solid foundation for a zero-trust architecture. INTEGRITY-178 tuMP provides the data isolation, control of information flow, resource sanitization, and fault isolation required of a high-robustness separation kernel.

In 2008, the INTEGRITY-178 RTOS became the first and only operating system to be certified against the NSA-defined Separation Kernel Protection Profile (SKPP)¹⁰. The certification against the SKPP was to both NSA “High Robustness” and Common Criteria EAL 6+, and it included a formal proof of correctness for the separation kernel¹¹. That certification also included penetration testing and covert channel analysis by the NSA. Green Hills Software’s latest RTOS version for multicore processors, INTEGRITY-178 tuMP, still meets the SKPP’s rigorous set of functional and assurance security requirements for customers who need it.

System integrators can layer higher-level security, such as advanced analytics, on top of the RTOS to complete the zero-trust architecture.

References

1. Defense Information Systems Agency, “Department of Defense (DOD) Zero Trust Reference Architecture, Version 1.0” (Feb 2021). https://dodcio.defense.gov/Portals/0/Documents/Library/(U)ZT_RA_v1.1(U)_Mar21.pdf

2. “The Pentagon’s next move in expanding zero trust,” C4ISRNET (15 Apr 2021). https://www.c4isrnet.com/cyber/2021/04/15/the-pentagons-next-move-in-expanding-zero-trust/

3. Exec. Order No. 14028, Executive Order on Improving the Nation’s Cybersecurity, 86 Fed. Reg. 26633 (17 May 2021).

4. K. DelBene et al., “The Road to Zero Trust (Security),” Defense Innovation Board (9 Jul 2019).

5. T. Nguyen et al., “High Robustness Requirements in a Common Criteria Protection Profile,” Proceedings of the Fourth IEEE International Information Assurance Workshop (Apr 2006).

6. National Security Agency, “Embracing a Zero Trust Security Model” (Feb 2021). https://media.defense.gov/2021/Feb/25/2002588479/-1/-1/0/CSI_EMBRACING_ZT_SECURITY_MODEL_UOO115131-21.PDF

7. W. Mark Vanfleet et al., “MILS: Architecture for High Assurance Embedded Computing,” CrossTalk (Aug 2005).

8. National Institute of Standards and Technology, Special Publication 800-207: Zero Trust Architecture (Aug 2020). https://csrc.nist.gov/publications/detail/sp/800-207/final

9. National Security Agency, “Segment Networks and Deploy Application-Aware Defenses” (Sep 2019).

10. National Security Agency, U.S. Government Protection Profile for Separation Kernels in Environments Requiring High Robustness, Version 1.03 (29 Jul 2007).

11. P. Huyck, “Safe and Secure Data Fusion – Use of MILS Multicore Architecture to Reduce Cyber Threats,” 2019 IEEE/AIAA 38th Digital Avionics Systems Conference (DASC) (2019). https://ieeexplore.ieee.org/abstract/document/9081638

12. “Raise the Bar: Demanding Cybersecurity Excellence for Cross Domain Solutions in the Battlespace,” Modern Integrated Warfare (3 Mar 2021). https://www.modernintegratedwarfare.com/space-and-cyberspace/raise-the-bar-demanding-cybersecurity-excellence-cross-domain-solutions-battlespace/

Green Hills Software · https://www.ghs.com/