High-definition sensors drive video compression

February 20, 2012

With the limited bandwidth of Ethernet, H.264 compression has become a 'must have' when streaming real-time video with high-definition sensors.

Multispectral electro-optical sensing plays a pivotal role in detecting threats and the movements of insurgents, terrorists, and other destabilizing forces operating with limited technology capability. Video gathered from surveillance platforms, such as Unmanned Aerial Vehicles (UAVs), helicopters, or ground vehicles, must be analyzed and disseminated throughout the battlefield command structure as quickly as possible. Ethernet is the medium of choice for streaming video, but because its bandwidth can be limited, real-time video compression is essential for the new breed of high-definition sensors or wherever many channels of video are to be carried.

Communications

Surveillance platforms carry diverse types of sensors, such as HDTV, regular TV, infrared, low light, and custom. Payloads also vary, because no single sensor platform has the space, endurance, electrical power, or cooling to support all sensors concurrently. Whichever kind of platform is deployed, wireless data links convey images to where they are needed for each specific mission. Typically, mobile sensor platforms use either SATCOM or digital data links to stream video. SATCOM is most often supported by large air and ground vehicles, whereas smaller platforms rely on air-to-ground digital radio channels with limited bandwidth.

Compression standards

The most commonly used compression standards are JPEG 2000 and H.264/MPEG-4 AVC. JPEG 2000 was developed for still-image compression, but it is also used for streaming video by transmitting consecutive images at video frame rates. Because every frame is coded independently, JPEG 2000 recovers from any transmission data loss on the next frame. H.264 codes most frames as differences from neighboring frames, so some image integrity can be lost in the same circumstances; however, this is recovered over a small number of subsequent frames, and complete images are transmitted periodically. H.264 has become the standard for Internet applications and HDTV, offering low latency and about twice the compression of MPEG-2. Typically, H.264 can achieve compression ratios of up to 100:1, whereas JPEG 2000 achieves 30:1.
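To put the compression figures quoted above in context, the following is a rough back-of-envelope sketch rather than a measured result. It assumes a 1920x1080 sensor at 30 frames per second with 12 bits per pixel (8-bit 4:2:0 sampling), a 100 Mbit/s Ethernet link, and a 70 percent usable share of that link; none of these values come from the article, and the function names are illustrative only.

```python
def raw_bitrate_bps(width, height, fps, bits_per_pixel):
    """Uncompressed video bit rate in bits per second."""
    return width * height * fps * bits_per_pixel


def compressed_bitrate_bps(raw_bps, compression_ratio):
    """Bit rate after compression at a given ratio (for example 100 for 100:1)."""
    return raw_bps / compression_ratio


def channels_per_link(link_bps, stream_bps, usable_fraction=0.7):
    """Whole number of streams that fit in the usable share of a link.

    usable_fraction is an assumed allowance for protocol overhead and
    other traffic, not a figure from any standard.
    """
    return int((link_bps * usable_fraction) // stream_bps)


if __name__ == "__main__":
    # Assumed sensor format: 1080p30, 8-bit 4:2:0 (12 bits per pixel).
    raw = raw_bitrate_bps(1920, 1080, 30, 12)
    print(f"raw: {raw / 1e6:.1f} Mbit/s")

    for name, ratio in (("H.264 at 100:1", 100), ("JPEG 2000 at 30:1", 30)):
        stream = compressed_bitrate_bps(raw, ratio)
        count = channels_per_link(100e6, stream)
        print(f"{name}: {stream / 1e6:.2f} Mbit/s per stream, "
              f"{count} stream(s) on an assumed 100 Mbit/s link")
```

Under these assumptions the uncompressed stream alone would exceed a 100 Mbit/s link several times over, which is why compression becomes necessary before the video reaches the network.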

Video distribution

Beyond surveillance vehicles, streaming video over Ethernet using H.264 can replace many cumbersome and inflexible video distribution systems wherever multiple video sources are to be distributed, switched, and shared among many display positions. Typical applications can be found in naval combat systems, ground forces’ surveillance vehicles, helicopters, and security installations, plus many areas of training, simulation, and recording.

Implementation choices

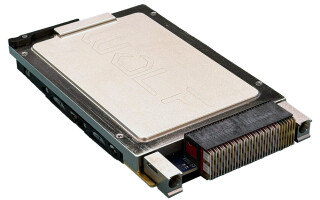

H.264 compression is very processor intensive, especially for HDTV or where low latency is needed, whereas decompression is far less demanding. Because H.264 is now such a common standard, there are many technology choices for its implementation: software, IP cores, ASICs, and Digital Media Systems-on-Chip (DMSoCs) are all available. On their own, however, these do not offer the flexibility needed to deal with multiple channels of differing formats and the evolving requirements of multispectral, multisensor platforms. A flexible and efficient design typically uses an FPGA for video capture and reformatting and DMSoCs for the intensive Discrete Cosine Transform (DCT)-based processing required by H.264. This architecture is used by the rugged DAQ8580 multichannel video compression subsystem from GE Intelligent Platforms, which supports two HDTV channels or four regular channels with Ethernet output (Figure 1).

Figure 1: DAQ8580 multichannel video compression subsystem from GE Intelligent Platforms
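As an illustration of the transform stage that contributes to the encoder workload described above, here is a minimal sketch of the 4x4 forward integer core transform that H.264 applies to residual blocks, an integer approximation of the DCT. It is plain Python for readability and says nothing about how the DAQ8580 or any DMSoC implements this step; the helper names are hypothetical, and the scaling that the standard folds into quantization is omitted.

```python
# Forward core transform matrix for 4x4 residual blocks in H.264.
CF = [
    [1,  1,  1,  1],
    [2,  1, -1, -2],
    [1, -1, -1,  1],
    [1, -2,  2, -1],
]


def matmul4(a, b):
    """Multiply two 4x4 integer matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transpose4(m):
    """Transpose a 4x4 matrix."""
    return [list(row) for row in zip(*m)]


def forward_core_transform(block):
    """Compute Y = CF * X * CF^T using integer arithmetic only.

    The result is unscaled; a real encoder applies scaling together
    with quantization, which is not shown here.
    """
    return matmul4(matmul4(CF, block), transpose4(CF))


if __name__ == "__main__":
    # A flat block has energy only in the top-left (DC) coefficient.
    flat = [[10] * 4 for _ in range(4)]
    for row in forward_core_transform(flat):
        print(row)
```

An encoder repeats this kind of operation, along with prediction, quantization, and entropy coding, for every block of every frame, which is why dedicated hardware is attractive for HDTV frame rates.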


Military applications for video over Ethernet are necessarily more demanding than those of the many devices, appliances, and terminals in everyday use. In addition to coping with environmental extremes, sensor platforms will continue to mix state-of-the-art sensors with legacy equipment, highlighting the need for more flexibility and performance. Users will demand better and faster threat detection capability, perhaps achieved by combining image preprocessing, target detection, tracking, and compression functions in future video processing subsystems.

Final farewell – or farewell finally

This is my final column for Military Embedded Systems, as I have reluctantly decided to bid farewell to paper, pencil, laptop, and cell phone in exchange for a new life of self-indulgent leisure. I have been privileged to have played my part in the development and rapid growth of the rugged embedded computing industry during the past 45 years. I wish all my ex-colleagues and many friends the very best for a long and bright future.

Editor’s note: Though we’re sad to see Duncan move on, the Field Intelligence column will continue in this magazine. Find out who the new author is in our next edition.

To learn more, e-mail Duncan at [email protected].