Managing the military’s big data challenge

August 04, 2020

With every second that ticks by, the amount of data gathered by the U.S. military grows, as does the need of the U.S. Department of Defense (DoD) to extract and use that data to form actionable intelligence. The result is intense pressure on military technology manufacturers to quickly produce software and hardware that can first process the zettabytes of data residing on Internet of Things (IoT) devices and then accurately assess its value. Done successfully, this gathering, processing, and analysis could fundamentally change warfare as it is understood today.

The digital age, the age of information, the computer age – all are terms used to describe how the 21st century has brought with it nearly unfathomable amounts of data. Knowing that the information exists and how to access it is one thing; making sense of it and putting it to use for defense and commercial purposes is what turns it into so-called big data.

The challenge for both warfighters and the manufacturers that supply their technology is acquiring that data in contested environments and relaying it efficiently. The task is made harder by the fact that conflict often occurs in data-denied areas, where it can be nearly impossible for these systems to reach the information the military wants, or information it doesn't yet know it needs.

Big data and the military

“The accessibility of data in-theater is a key challenge for advancing big data utilization in the military,” says Chris Solan, chief offering manager at National Instruments (NI – Austin, Texas). “Operational security, hardening against vulnerabilities, and ultra-high reliability can factor in driving designs incorporating autonomous isolation with little connection to the outside world, which are factors at odds with the free-flowing world of big data. However, there are many creative ways to utilize big data while maintaining autonomy and protections of deployed assets. For instance, at NI, we are investing in our digital ecosystem to enable the linking of data produced through design, production, and field testing of systems so that data can be collected and analyzed outside data-poor environments.”

“Big data is a broad term with applicability across many areas of interest to the military,” Solan states. “We see a clear trend of military requirements directing the use of big data throughout the life cycle of new procurements and the modernization of legacy systems. As new sources of data develop and novel uses for data emerge, big data growth will continually outpace utilization in all industries, including the military. We don’t see this as a limitation, but rather a forcing function that will drive innovation.”

“While it’s true that commercial applications and game systems have acknowledged this challenge and apply big data in creative ways, these industries do not operate in the life-and-death environments that warfighters deal with daily,” says Dr. Scott Neal Reilly, senior vice president and principal scientist at Charles River Analytics (Cambridge, Massachusetts).

“In particular, systems that are learned from data instead of designed have the risk of having limitations based on limitations of the available data that can lead to inappropriate responses in novel situations, and it isn’t clear which systems are vulnerable to what kinds of novelty,” Reilly asserts. “Developing data-driven systems that can be validated to the extent necessary for deployment in real-world, military contexts is a major challenge for the community.”

A good starting point for overcoming the problems presented by using big data in military applications is to understand what exactly makes the data so big in the first place.

The Five Vs

The Five Vs of big data (volume, velocity, variety, veracity, and value) are to big data what the who, what, where, when, and why are to journalism: together, they define it. They serve as a road map for taking full advantage of the digital memory that exists across global databases. The last V, value, underscores that without the ability to structure the data, it is essentially useless.

“We agree that collecting sufficient volumes of data (the first V in the big data model) is essential but will provide no valuable outcome without the other Vs in the chain,” Solan says. “When it comes to the second V, velocity, the definitions of ‘timely’ and ‘efficient’ can vary greatly, depending on the particular application.

“Some applications will require real-time processing of data during the execution of a process, while in other cases, timely and efficient may mean getting results within hours or days instead of the months or years it might take for a human to manually determine. NI is focusing on software-enabled automation combined with reusable and configurable system architectures to greatly reduce the time and effort required to transform collected data into actionable information.

“Moreover, our driving big data philosophy is to use software automation to drive velocity through the remaining Vs – variety, or automating the association and linking of disparate data sources; veracity, or automating data cleansing and statistical significance throughout the processing chain; and value, or converting the initial volume of data into useful outcomes by enabling advanced analytics,” he adds.
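As a rough illustration of what automating variety, veracity, and value can mean at the smallest scale, the Python sketch below cleanses readings from two sources, links them by timestamp, and reduces the result to a single indicator. It is a minimal sketch under stated assumptions, not NI's pipeline: the `Reading` record layout, the two redundant temperature sensors, the valid range, and the 0.5-second matching tolerance are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

@dataclass(frozen=True)
class Reading:
    source: str              # hypothetical sensor identifier
    t: float                 # timestamp in seconds
    value: Optional[float]   # None models a dropped or corrupt sample

def cleanse(readings, lo, hi):
    """Veracity: drop missing, out-of-range, and duplicate readings; sort by time."""
    seen, clean = set(), []
    for r in readings:
        if r.value is None or not (lo <= r.value <= hi):
            continue
        if (r.source, r.t) in seen:
            continue
        seen.add((r.source, r.t))
        clean.append(r)
    return sorted(clean, key=lambda r: r.t)

def link(a, b, tol=0.5):
    """Variety: pair each reading in a with the nearest-in-time reading in b, if within tol seconds."""
    pairs = []
    for ra in a:
        best = min(b, key=lambda rb: abs(rb.t - ra.t), default=None)
        if best is not None and abs(best.t - ra.t) <= tol:
            pairs.append((ra, best))
    return pairs

def mean_disagreement(pairs):
    """Value: reduce linked pairs from two redundant sensors to one health indicator."""
    return mean(abs(rb.value - ra.value) for ra, rb in pairs) if pairs else None

# Two hypothetical redundant temperature sensors with a gap, a duplicate, and an outlier.
sensor_a = [Reading("a", 0.0, 20.0), Reading("a", 1.0, None),
            Reading("a", 2.0, 21.0), Reading("a", 2.0, 21.0)]
sensor_b = [Reading("b", 0.2, 20.5), Reading("b", 2.1, 21.25),
            Reading("b", 5.0, 999.0)]

clean_a = cleanse(sensor_a, lo=-40.0, hi=125.0)
clean_b = cleanse(sensor_b, lo=-40.0, hi=125.0)
print(mean_disagreement(link(clean_a, clean_b)))  # prints 0.375
```

Each stage is a plain function so it can run unattended on every new batch of data; a production system would swap the nearest-timestamp join and fixed thresholds for whatever association and validation rules the application actually requires.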

The roles of AI and ML

Artificial intelligence (AI) and machine learning (ML) play pivotal roles in analyzing the value of collected data. Sifting through the seemingly endless sea of digital information has proven too arduous a task for human warfighters, but it is one that an AI-powered algorithm can often complete in seconds.

“The DoD AI strategy is prioritizing systems that reduce cognitive overload and improve decision-making,” says Michael Rudolph, aerospace and defense industry manager for MathWorks (Natick, Massachusetts). “In order to speed up that OODA [observe, orient, decide, act] loop, AI needs to make earlier predictions and identify emerging issues from a variety of data sources. With big data in AI, the question is not just how much data but one of data and feature quality” (Figure 1).

[Figure 1 | The evolution from artificial intelligence to deep learning, considered in terms of computer performance over time. MathWorks chart.]

Big data has been called the oxygen of the ML revolution and of military intelligence-gathering. The two are meant to complement each other, with the goal of giving warfighters timely, mission-critical information at the tactical edge.

“Highly successful predictive analytics and machine learning applications are data-hungry, require years to develop, and can amass substantial code complexity in the first operational version,” says Robert Hyland, director of program transition and principal scientist at Charles River Analytics. “We see significant promise in new programming techniques such as probabilistic programming languages (PPLs) that feature data set probability distributions as first-class programming features, among other programmer affordances.

“PPLs also help reduce the size and complexity of predictive systems,” Hyland continues. “For example, the United Nations ML system for seismic monitoring required $100 million over several years to develop 28,000 lines of code. Its successor, NET-VISA, was implemented to perform the same ML function but only required approximately 25 lines of code written in an early PPL, and it cost $400,000.”

Advances like this UN example show how closely big data and ML/AI depend on one another.
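To give a flavor of what treating probability distributions as first-class values means, here is a toy Python sketch. It is not a real PPL and not the NET-VISA model; it is a minimal illustration, assuming a small `Dist` class of our own, in which distributions can be composed with `bind` and conditioned on observations with `condition`, with inference done by exhaustive enumeration. The 1% event prior and the detector rates are invented numbers.

```python
from collections import defaultdict
from fractions import Fraction

class Dist:
    """A finite discrete distribution mapping outcome -> probability (exact Fractions)."""
    def __init__(self, probs):
        self.probs = dict(probs)

    def bind(self, f):
        """Chain a conditional distribution f(outcome) -> Dist, as in a generative model."""
        out = defaultdict(Fraction)
        for x, px in self.probs.items():
            for y, py in f(x).probs.items():
                out[y] += px * py
        return Dist(out)

    def condition(self, pred):
        """Keep outcomes consistent with an observation and renormalize (Bayes' rule)."""
        kept = {x: p for x, p in self.probs.items() if pred(x)}
        total = sum(kept.values())
        if total == 0:
            raise ValueError("observation has zero probability")
        return Dist({x: p / total for x, p in kept.items()})

    def prob(self, pred):
        """Total probability of outcomes satisfying pred."""
        return sum(p for x, p in self.probs.items() if pred(x))

def bernoulli(p):
    return Dist({True: p, False: 1 - p})

# Hypothetical model: a seismic event occurs with prior 1/100; a detector fires
# with probability 9/10 on a real event and 1/20 on background noise.
model = bernoulli(Fraction(1, 100)).bind(
    lambda event: bernoulli(Fraction(9, 10) if event else Fraction(1, 20))
    .bind(lambda detected: Dist({(event, detected): Fraction(1)}))
)

posterior = model.condition(lambda s: s[1])   # observe: the detector fired
print(posterior.prob(lambda s: s[0]))         # prints 2/13
```

The model itself is only a few lines because inference lives in generic code (`condition`) rather than in the model. Real PPLs supply far more scalable inference engines, and that separation of model from inference is one plausible reason PPL programs can be so much shorter than hand-built systems.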

“The capability to effectively do this relies upon big data – data generated by the models and simulations that define the expected behavior, data observed in each component during production testing, and data collected from actual field operating conditions and failures experienced,” Solan notes. “Transforming this vast data into meaningful prognostics can require ML for certain functions, such as learning cause-and-effect relationships to automatically refine forecasts and alerts.”

Analyzing text data

Military intelligence often comes from textual sources such as smartphone messages, email, social-media posts, and documents. Analyzing this data for keywords, group behavior, and actionable intelligence is often beyond the capacity of a human analyst (Figure 2).

[Figure 2 | Analyzing military intelligence data for actionable information is often beyond the capabilities of human operators, thereby driving the need for improved artificial intelligence and machine learning methods. Shown: Operations at U.S. Army Cyber Command (ARCYBER) headquarters, Fort Belvoir, Virginia. Photo by Bill Roche.]
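A naive first pass at keyword analysis simply counts the non-trivial terms that recur across a batch of messages. The minimal Python sketch below assumes a made-up stopword list and sample messages; it is not any vendor's method, and, as Patten explains next, it ignores the context and ambiguity that real systems must handle.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list.
STOPWORDS = {"the", "a", "an", "at", "to", "of", "and", "in", "on", "is", "for", "near"}

def keywords(messages, top_k=3):
    """Return the top_k most frequent non-stopword terms across all messages."""
    counts = Counter()
    for msg in messages:
        tokens = re.findall(r"[a-z0-9']+", msg.lower())
        counts.update(t for t in tokens if t not in STOPWORDS and len(t) > 2)
    return counts.most_common(top_k)

# Made-up sample traffic.
msgs = [
    "Convoy spotted near the bridge at dawn",
    "Bridge checkpoint closed; convoy rerouted",
    "Weather clear at the checkpoint",
]
print(keywords(msgs))
```

Raw frequency surfaces "convoy," "bridge," and "checkpoint" here, but it cannot tell which sense of a word is meant or link different phrasings of the same idea, which is exactly the gap the context-aware approaches described below aim to close.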

“One important type of unstructured military data is textual documents – like reports, manuals, and websites,” says Dr. Terry Patten, principal scientist at Charles River Analytics. “This data was typically generated for human consumption and is very difficult for machines to process because words often have several meanings and there are many different ways of saying the same thing. Determining the intended meaning typically requires looking at the context of words in the sentence and the context of the document as a whole. However, there is so much valuable information trapped in textual documents that it is critical to find ways to extract it automatically. Charles River Analytics is developing an approach to extracting meaning from text that is based on sociolinguistic theory and cutting-edge, constraint-solving technology.”

Software companies bear the brunt of the operational challenges of structuring big data. Hardware manufacturers certainly face processing obstacles, but taking responsibility for the algorithms that make sense of the processed information is an entirely different undertaking.

“We’ve been focused on building out a variety of tools for different types of unstructured data,” says Seth DeLand, data science product manager at MathWorks. “We recently added a new product, Text Analytics Toolbox, that focuses on the analysis and understanding of unstructured text data. Our customers are using it in applications such as sentiment analysis of social-media data, predictive maintenance using mechanic logs, and topic modeling to summarize large collections of text.”

Even with these advances, engineers know that the big data problem remains. But the drive to borrow commercial innovation and bring it to the tactical edge is stronger than ever, turning the big data problem into a big data challenge to be met.

SIDEBAR

Big data, military sensors, and OpenVPX

The military sensor chain generates vast amounts of data – information flows in from ISR [intelligence, surveillance, and reconnaissance] and electronic warfare (EW) tools, with input from signals and communications intelligence activities. The amount of data is so overwhelming that it becomes impossible for human operators to sift through it all.

“If you think about the way that sensor technology continues to advance in the military, there’s exponentially increasing amounts of data,” says Shaun McQuaid, director of product management at Mercury Systems (Andover, Massachusetts). “Whether that’s images with higher pixel counts or video data or electromagnetic (EM) spectrum and capturing larger and larger bandwidths of that simultaneously, at the end of the day all of these sensors actually produce a big data problem for our platforms.”

“There’s always more data available,” McQuaid continues. “And I think it’s becoming increasingly clear that trend is not going to end any time soon. The more that you look at sensors and how they’re growing, the more that you focus on things like pixel count on cameras or infrared imaging. With the amount of work that’s done in the EM spectrum, from defending against electronic attack to jam or spoof or whatever the case might be, the amount of data that’s necessary in order to operate effectively, and the amount of communication that has to happen, is only going to increase. I think that there’s no doubt that the direction we’re going, from a data perspective, is definitely upwards. That’s the trend, I think: more and more and more.”

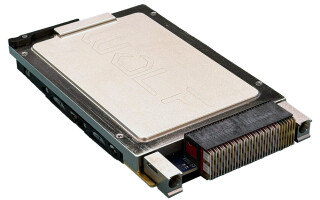

To manage that glut of information, McQuaid and his colleagues are taking the data center concept used in the commercial world and adapting it for military applications through solutions based on the OpenVPX architecture (Sidebar Figure 1).

[Sidebar Figure 1 | The EnsembleSeries GSC6206 NVIDIA Turing Architecture GPU Coprocessor Engine with hardware-enabled support for artificial intelligence (AI) applications is aimed at use in advanced C4I processing, autonomous platforms, and smart missions. Mercury Systems photo.]

“We’re actually using the data center – so same processes, same fabric, same software, same tools, same everything – uncompromised and putting it into the OpenVPX format,” says John Bratton, product marketing director at Mercury Systems.

The data center is brought to the warfighter because they can’t build it themselves, McQuaid says. “[It] gives them the ability to extract actionable information from that big data stream and provide it in a timely manner to the folks on the platform if it’s manned, or the operators if it’s unmanned, in order to make decisions in real time.”

OpenVPX has built-in advantages for the data center application: “One is that the open architecture of OpenVPX, and investment in those open standards, means that technology refresh can happen much more quickly and in a much more agile manner than they used to in the past,” McQuaid says.